Were it merely the case that all Charles Stross was offering in his novel Accelerando was a kind of critique of contemporary economic trends veiled in an exquisitely Swiftian story, the book would be interesting enough, but what he gives us transcends that. The novel offers up a model of how technological civilizations might evolve, one that combines the views of several of his predecessors in a fascinating and unique way.

Underlying Stross’s novel is an idea of how technological civilizations develop known as the Kardashev scale, put forward by the Russian physicist Nikolai Kardashev in the early 1960s. Kardashev postulated that civilizations go through different technological phases based on their capacity to tap energy resources. A Type I civilization is able to tap the equivalent of the solar radiation reaching its home planet, and he thought that human civilization had come close to that level by 1964. A Type II civilization in his scheme is able to tap an amount of energy equivalent to the output of its parent star, and a Type III civilization is able to tap energy equivalent to the output of its entire galaxy. Others have speculated about Type IV and Type V civilizations, able to tap the energy of the entire universe or even a multiverse, which would go beyond even the scope of Kardashev’s broad vision. Civilizations of this scale and power would indeed be little different from gods, and in fact would be more powerful than any god human beings have ever imagined.
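Kardashev's types are discrete steps, but one way to see how enormous the gaps between them are is a continuous rating later proposed by Carl Sagan (his refinement, not Kardashev's own definition): K = (log10 P − 6) / 10, with P the power a civilization uses in watts. Sagan's approximate thresholds put Type I, II and III at about 10^16, 10^26 and 10^36 W. The short Python sketch below simply evaluates that formula under those assumed thresholds; the function and constant names are illustrative.

```python
import math


def kardashev_rating(power_watts: float) -> float:
    """Continuous Kardashev rating using Carl Sagan's interpolation
    K = (log10(P) - 6) / 10, where P is power use in watts.
    (Sagan's later refinement, not Kardashev's original scheme.)"""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return (math.log10(power_watts) - 6) / 10


# Sagan's approximate power thresholds for the three Kardashev types.
THRESHOLDS_W = {"Type I": 1e16, "Type II": 1e26, "Type III": 1e36}

if __name__ == "__main__":
    for label, watts in THRESHOLDS_W.items():
        print(f"{label}: {watts:.0e} W -> K = {kardashev_rating(watts):.1f}")
    # Each whole step on the scale is a jump of ten orders of magnitude in power.
    ratio = THRESHOLDS_W["Type II"] / THRESHOLDS_W["Type I"]
    print(f"Type II / Type I power ratio: {ratio:.0e}")
```

Because the scale is logarithmic, moving from one type to the next means commanding roughly ten billion times more power, which is exactly the kind of "bigger and bigger" growth the scale takes for granted.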

Kardashev lays out most of his argument in his article On the Inevitability and Possible Structures of Supercivilizations. It is a fascinating piece, and I encourage you to follow the link and check it out. The article was published in 1984, a poignant year given Orwell’s dystopia, and at the height of the Second Cold War, when tensions between the superpowers were running high. Kardashev, of course, had no idea that within a few short years the Soviet Empire would be no more. Lurking beneath his essay one can find certain Marxist assumptions about technological capacity and a cult of bigness. He seems to think that the dynamic of civilization will require bigger and bigger solutions to problems, and that there is no natural limit to how big such solutions could become. Technological civilizations could expand indefinitely, re-engineering the solar system, the galaxy, or even the universe to their purposes.

Yet this “bigger is better” ideology is just that, an ideology, not a truth. It is the ideology that led the Soviets to pump out more and more steel without asking themselves “steel for what?” Throwing more and more resources at a problem may have saved Russia during the Second World War, but in the war’s aftermath it produced an extremely complex and inefficient machine that was beyond the capacity of intelligent direction, and that ultimately proved incapable of providing a standard of living on par with the West. We are, thankfully, no longer in thrall to such gigantism.

Stross, for his part, does not challenge these assumptions but rather builds his story upon them. Three other ideas form the prominent backdrop of the story: Dyson Spheres, Matrioshka Brains, and the Singularity. Let me take each in turn.

In Accelerando, as human civilization rapidly advances towards the Singularity, it deconstructs the inner planets and builds a series of spheres around the sun in order to capture all of the sun’s energy. These so-called Dyson Spheres are an idea Stross borrows from the physicist Freeman Dyson, and one that Kardashev directly cites in On the Inevitability and Possible Structures of Supercivilizations. Dyson developed the idea back in 1960 in his article Search for Artificial Stellar Sources of Infra-Red Radiation, which proposed, 24 years before Kardashev’s essay, that one of the best ways to find extraterrestrial intelligence would be to look for signs that solar systems had undergone this sort of engineering. Dyson himself found the inspiration for his spheres in Olaf Stapledon’s brilliant 1937 novel Star Maker, one of the first novels to tackle the evolution of technological society and of the universe.

A second major idea that serves as a backdrop to Stross’s novel is the Matrioshka Brain. The idea was proposed by the computer scientist and longevity proponent Robert Bradbury, who, in a sad irony, died in 2011 at the early age of 54. It is also rather telling, and tragic, that given his dream of eventually uploading his mind into the eternal electronic cloud, all of the links I could find to his former longevity-focused organization, Aeiveos, appear to be dead, seeming evidence that our personhood really does remain embodied and disappears with the end of the body.

The Matrioshka Brain builds on the idea of the Dyson Sphere, but while the point of the latter is to extract energy, the point of the former is computation: vast computing spheres nested one inside the other like the Russian dolls after which the Matrioshka Brain is named. In Accelerando, human-machine civilization has deconstructed the inner planets not just to capture energy but to build computers of massive scale.

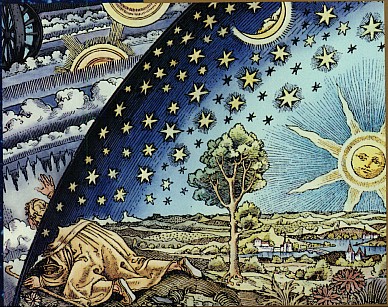

Both of these ideas, Dyson Spheres and Matrioshka Brains, put me in mind of the crystal spheres that the ancients imagined surrounded and circled the earth, carrying the planets and stars. It would be the greatest of ironies if the very science born when men such as Copernicus, Kepler, and Galileo overthrew this conception of the cosmos gave rise to an engineered solar system that resembled it.

The major backdrop of Accelerando is, of course, the movement of technological civilization, begun by humans, towards the Singularity. In essence, the idea of the Singularity is that the intelligence of machines born of human technological civilization will eventually exceed human intelligence. Once human beings have designed machines smarter than themselves, those machines will be able to design machines smarter still, and the process will accelerate, with the time between the creation of one level of intelligence and the next shrinking to shorter and shorter intervals. At some point the reality that emerges from this growth of intelligence becomes unimaginable to current human intelligence, like a slug trying to understand humanity and its civilization. This is the point of the Singularity, a term Vernor Vinge, in his 1993 article The Coming Technological Singularity: How to Survive in the Post-Human Era, borrowed from the physics of black holes. Like a black hole’s event horizon, it marks a boundary beyond which no information can pass back to us.
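Why should ever-shorter intervals add up to a single "point" rather than an endless slope? A deliberately simplified, hypothetical model makes the arithmetic visible (this is an illustrative assumption, not something Vinge or Stross specifies): suppose the first jump in machine intelligence takes time $T_0$, and each later jump takes a fixed fraction $r$ of the time the previous one did, with $0 < r < 1$. The total time for $n$ jumps is then a geometric series:

$$
T_n = T_0 + rT_0 + r^2T_0 + \cdots + r^{n-1}T_0 = T_0\,\frac{1-r^n}{1-r},
\qquad
\lim_{n\to\infty} T_n = \frac{T_0}{1-r}.
$$

With $T_0 = 20$ years and $r = 1/2$, for instance, infinitely many generations of ever-smarter machines would fit inside $20/(1-1/2) = 40$ years. Real progress need not follow any such tidy pattern; the model only shows how accelerating intervals imply a finite horizon.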

If you follow only one link in this article, I highly recommend that you make it Vinge’s piece, for unlike the optimist Ray Kurzweil, Vinge is fully conscious of the existential risks that the Singularity poses and the philosophical questions it raises.

Stross’s novel, in its own wonderful way, also raises, but does not grapple with, these risks and questions. They remain for us to think our way through before our thinking is done for us.

Rick,

Thanks for this exceptionally well-written review of Accelerando. You make an excellent case for reading the novel by Charles Stross, and your thoughts regarding the foundations for the work make the suggestion to read it even more appealing.

My own interests are clearly in the realm of “…the existential risks that the Singularity poses and the philosophical questions it raises.” The whole matter of technologically achieving the goal of manufacturing a device or machine with intelligence exceeding that of humanity, while still the stuff of science fiction in my view, is at the heart of current endeavors and research in both philosophy and neuroscience. I wrote a blog entry some time ago on this subject and was delighted to receive a response from a neuroscience researcher at the University of London. Our spirited discussion in the comments section was perhaps the best response to a post I have ever received:

http://jjhiii24.wordpress.com/2011/06/01/162/

From my point of view, the term “artificial intelligence” has always seemed a bit like an oxymoron. However one wishes to define “intelligence,” the human variety consists of so many contributing factors, honed over millions of years of evolution, that to suggest we might replicate it artificially, to the point of exceeding our own, pushes the boundaries of credulity.

We may develop technologies with capacities for “data processing” far exceeding those of the human brain, to be sure, and great accomplishments may follow from our efforts in the technological realm. Whatever the results of such efforts might be, however, it seems disproportionately optimistic to think that they will produce something as profoundly transcendent as the human soul or spirit, which I believe is foundational to our very human nature and to human consciousness itself.

I appreciate how difficult it is for those not inclined to investigate the ineffable aspects of our humanity even to entertain the thought of “unseen forces or energies” as a realistic component of the human variety of intelligence, but I do fear for future generations, who may well have to face the dangers suggested by the supercomputer “HAL” in Stanley Kubrick’s film “2001: A Space Odyssey.” I suspect that when our technology begins to exceed our collective wisdom as humans, there may be consequences beyond our ability to correct by the time we realize what we have done.

Hello John,

Thank you again for your compliment and your comments, and thanks for the links to those great posts.

Again, I am in complete agreement with you on the essential points of the matter, although my perspective is perhaps somewhat different.

I think proponents of the Singularity greatly underestimate how difficult, and perhaps impossible, it would be to replicate anything like human consciousness on a machine, as opposed to merely creating devices that mimic such intelligence. Such an artificial intelligence may be the holy grail of AI research, but I think the project will likely prove so resource intensive, and ultimately so unnecessary, that AI research will instead give rise to a form of intelligence much different from the human sort, one that, to echo what you have said, may mean that “when our technology begins to exceed our collective wisdom as humans, there may be consequences beyond our ability to correct by the time we realize what we have done.”

I remember a comment by the philosopher Daniel Dennett that may illustrate my point. He said that while it may be technically feasible to reproduce all the intricate complexity of a living bird, it might prove cost-prohibitive and unnecessary. It might be as expensive to recreate a single living bird in artificial form as it was to go to the moon, and why, after all, would we need to do this when we already have 747s?

I think AI is proving to be like that. We are creating forms of intelligence that already inundate and transform our lives. As I’ve tried to illustrate in past posts:

Our machines already, in some sense, run our financial markets and challenge democratic governance.

We are increasingly using them to fight our wars.

And they are increasingly making human labor, both manual and intellectual, superfluous.

Machines are “writing” news articles and “doing” scientific research.

I sometimes wonder if what is needed today is a new conservative party: not conservative in the traditional sense, but humanist, in that its focus would be on preserving the legacy and wellbeing of the species. Such a party would help to balance out some of the negative trends emerging in the technological arena.

On a more philosophical note, I am curious what your thoughts are on the extended mind movement in neuroscience, which tries to get beyond locating the mind in the mere physical structure of the brain. I am not sure whether this supports or challenges the hopes of transhumanists to relocate mind in the realm of machines.

I found this half of the article easier to understand than the first half, perhaps because I’d come across more of the ideas before.

I’m glad you say the issues are not grappled with in the novel but are instead left for us to think about. I prefer novels like that, and your review definitely encouraged me to go out and find a copy.

I agree with you, Nicola, the best books are those that leave us with the most questions.

Sometimes intelligent machines and conscious machines are conflated.

I try to argue in my post that we will definitely be able to create machines that simulate and surpass human intelligence, but that these machines will still not be conscious. Of course, some argue that if something behaves in every way as if it were conscious, that is sufficient. For that matter, how do you know that any of your friends are really conscious?

My argument is that consciousness, like life, is a dissipative structure. That means it is not static like a machine but dynamic. It constantly reforms and recreates itself in energy exchange with its environment. We might be able to create entities that are like this but at that point they may no longer really be machines.

I may be drawing too fine a line in the argument, but I think consciousness is key and may eventually acquire power on its own.

I also came to what was, for me, a surprising conclusion: that the purpose of life (and consciousness) is to break down the universe. In other words, consuming energy in greater and greater amounts is what life does, so the overall trend must be to do more and more of it. This certainly does not agree with my political views, which lean to the left, but it falls completely in line with the themes of Accelerando and with some of your other references to civilizations expanding, gathering around stars, and consuming them.

Hi James,

I highly recommend readers check out your great article.

I am going to ask a similar question on your blog as well. I completely agree that machines will have to have the life-like quality of self-replication before they can truly be said to constitute the next stage of evolution. Yet I sometimes wonder if we are overestimating the value of human-like consciousness.

A whole spate of recent neuroscience seems to point towards the conclusion that self-consciousness is a mere epiphenomenon; see, for example, Leonard Mlodinow’s Subliminal. A revival of the unconscious is under way, not in the Freudian sense, but in the sense that most of the things we do, such as driving, walking, choosing a mate, and making judgments, are not really under the control or direction of the conscious mind. Even the notion of free will has come under assault from recent research.

It seems, too, that life really doesn’t need consciousness to be successful. After humans, the most successful species are the ants and termites. As E.O. Wilson points out in his new book The Social Conquest of Earth, what all these species share is that they are eusocial. In other words, they are networked and share common “goals.” I see no reason why the “intelligent” machines we are creating couldn’t be our evolutionary replacement, as long as they are self-replicating and have this eusocial aspect. It would, I think, be a spiritual loss for the universe for a semi-conscious species such as ourselves to be supplanted by a non-conscious collection of species, but that may be the next stage of evolution despite the loss.
