“She was asked what she had learned from the Holocaust, and she said that 10 percent of any population is cruel, no matter what, and that 10 percent is merciful, no matter what, and that the remaining 80 percent could be moved in either direction.” Kurt Vonnegut

It seems certain that human beings need stories to live, and need to share some of these stories in order to coexist with one another. In our postmodern era these shared stories, or meta-narratives, are passé: the voluntary suspension of disbelief has become impossible, and the wizard behind the curtain has been unmasked. Today’s apparent true believers are instead almost cartoonish versions of the adherents of the fanatical belief systems, political ideologies and unquestionable cultural assumptions and prejudices of past eras. Not even their most vocal adherents really believe in them, except, perhaps, for those who put their faith in conspiracy theories, which at root are little more than a search for answers, panicked to the point of derangement, by people who have realized the world is a scam.

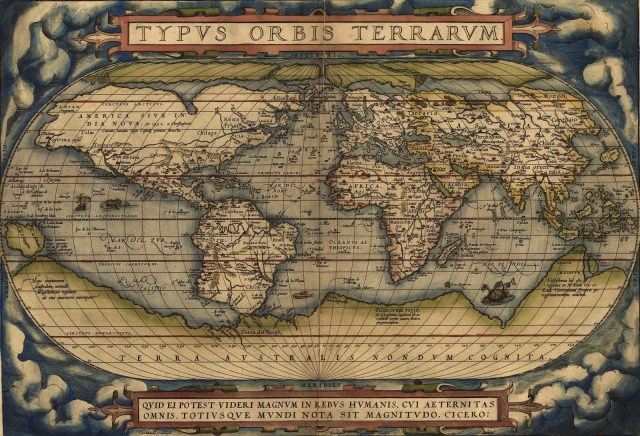

Yet just because we live in an age when all stories have an aura of fantasy doesn’t mean we’ve stopped making them, or even stopped looking for an overarching story that might explain to us our predicament and provide us with some guidance. The realization that the map is never the territory doesn’t imply that maps are useless, only that every map demands interrogation during its use and as a prerequisite to our trust.

I recently had the pleasure of picking up a fresh version of one of these meta-narratives or maps, a book by the astrobiologist Adam Frank called Light of the Stars: Alien Worlds and the Fate of the Earth. Frank’s viewpoint, I think, is a somewhat common one among secular, environmentally conscious persons. He thinks that we as a species have been going through the equivalent of adolescence, and that unless we learn to use our newly developed capacities wisely we’re doomed to a bad end.

The difference between Frank and others on this score is that he wants us to see this story in a cosmic context. We are, he argues, very unlikely to be the first species in the universe to experience growing up in this sense. Recent discoveries showing the ubiquity of planets, for Frank, put the odds in favor of life, and even intelligence and technological civilization, having developed throughout the universe many times before.

Using what we already know about Earth and the thermodynamic costs of energy use, Frank is able to create sophisticated mathematical models that show how technological civilizations can rise only to collapse due to the impact of energy use on their planetary environment, and why any technological civilization that survives will need to have found a way to align its system of energy use with the boundaries of its biosphere.

Frank’s is an interesting and certainly humane viewpoint, yet it leads to all sorts of questions. Does the idea of adolescence and maturation even make any sense when applied to our species? If anything, doesn’t history show the development of civilization to be cyclical rather than progressive? To get a real sense of our predicament, wouldn’t it be better to turn to the actual history of human societies rather than look to the fates of purely imagined alien civilizations?

Indeed, for a book on how our technological civilization can avoid what is an otherwise inevitable collapse, Light of the Stars is surprisingly thin on the rise and fall of real human societies over the course of history. To the extent such history plays any role at all in Frank’s model, it focuses on the kinds of outright collapse seen in places like Easter Island, which have recently become the focus of historians and anthropologists such as Jared Diamond.

By focusing on the binary division between extinction and redemption, Frank’s is just one more voice urging us to “immanentize the eschaton”, but one can ask whether what we face is less a juncture between utopian and dystopian outcomes than something like the rolling apocalypse of William Gibson’s “Jackpot”. That is, not the “utopia or oblivion” version of alternative futures that probably made sense during the mutually assured destruction madness of the Cold War, but the perhaps permanent end to the golden-age climatic, technological and economic conditions of the past, as bets on the human future that had been placed long ago draw dead.

Whiggish tales of perpetual progress were popular a few years ago, but have run into hard times of late, and for good reasons. Instead, we have the return of cyclical narratives, stories of rise and fall. The Age of Trump lends itself to comparison with the fall of Rome: a declining empire with a vain, corrupt, incompetent, and increasingly deranged leadership. Trump is like the love child of Nero and Caligula, as someone joked on Twitter, which is both funny and disturbing because it’s true.

Personally I’m much more inclined towards these cyclical versions of history than I am towards linear ones, though admittedly this is some sort of deep-seated cognitive bias, for I tend to find cyclic cosmologies more intriguing as well. Unfortunately, there don’t seem to be any alternatives besides a history with a clear beginning, middle, and end and one that circles back upon itself. It may be a limit of culture, or human cognition, rather than a true reflection of the world, but how to see beyond it in a way that doesn’t deny time and change entirely I cannot fathom.

These days it’s hard to mention cyclical history without being mistaken for a fanboy of Oswald Spengler and getting spammed by Jordan Peterson with invitations to join the Intellectual Dark Web. Nevertheless, there are good and, strange to say, progressive versions of such histories if you know where to find them.

A recent example of these is Bas van Bavel’s The Invisible Hand? How Market Economies Have Emerged and Declined since AD 500. In a kind of modern version of the 14th-century Islamic scholar Ibn Khaldun’s theory of societies’ transition from barbarism to decadence and finally back to barbarism, van Bavel traces the way in which prosperous economies have time and time again been undone by elite capture. Every thriving economy eventually gives way to a revolt of the winners, who use their wealth to influence politics in their favor in an effort to institutionalize their position. Eventually, this has the effect of undermining the very efficiency that had allowed the economy to prosper in the first place.

Though largely focused on the distant past, van Bavel is clearly saying something about our own post-Keynesian era, where plutocrats and predators use the state as a means of pursuing their own interests. And like most cyclical versions of history, his view of our capacity to break free from this vicious cycle is deeply pessimistic.

“None of the different types of states or government systems in the long run was able to sustain or protect the relatively broad distribution of property and power found in these societies that became dominated by factor markets, for instance by devising redistributive mechanisms. Rather, in all these cases, the state increasingly came under the influence of those who benefited most from the market system and would resist redistribution.” (271)

How this elite capture of our politics will intersect with global climate change is anybody’s guess. Right now it doesn’t look good: as long as a large portion of this elite has its wealth tied up in the carbon economy, or worse, thinks its wealth somehow gives it an escape hatch from the earth’s environmental crisis, the move towards decarbonization will continue to be too little and too late.

One downside to cyclical theories of history, for me at least, is that far too often they become reduced to virtue politics. In a sort of inversion of the way an old-school liberal like Steven Pinker sees moderns as morally superior to people in the past, old-school conservatives, who by their nature are in thrall to their ancestors, tend to view those who came before as better versions of ourselves.

Though it’s becoming increasingly difficult to argue that the time in which we live hasn’t produced a greater share of despicable characters than times past, on reflection that’s very unlikely to be the case. What separates us from our ancestors isn’t their superior virtue, but the degree of autonomy and interdependence that made such virtues necessary in the first place. Our lack of autonomy, along with the fact that each of us is now interchangeable with another of similar skills (of which there are many), is a reflection of our society’s complexity, and for this reason it’s the stories told about the unsustainability of this complexity that I find the most compelling.

The granddaddy of the idea that it is unsustainable complexity which makes the fall of societies inevitable was certainly Joseph Tainter, with his 1988 book The Collapse of Complex Societies. Tainter thought that societies begin to decline once they reach the point where increasing complexity only results in an ever thinner marginal return. “Fall” isn’t quite the right word here, though, since Tainter broke from the moralism that had colored prior histories of rise and fall. Instead, he viewed the move towards simplicity we characterize as decline as something natural, even good, just another stage in the life cycle of societies.

More recent works on decline due to complexity are perhaps not as non-judgemental as Tainter’s, but have something of his spirit nonetheless. There is James Bridle’s excellent book New Dark Age, and Samo Burja’s idea of the importance of what he calls “intellectual dark matter” and the dangers of its decay. Outside of historians and social scientists, the video game developer Jonathan Blow has done some important work on the need to remove complexity from bloated systems, while the programmer Casey Muratori has been arguing for the need to simplify software.

To return to Adam Frank’s book: he’s right that the stories we tell ourselves are extremely important in that they serve as a guide to our actions. The dilemma is that we can never be sure if we’re telling ourselves the right one. The problem with either/or stories that focus on opposing outcomes, like human extinction or technologically enabled harmony with the biosphere, is that they’re likely focusing on the tails of the graph, whereas the meat lies in the middle with all the scenarios that exclude the two.

Within that graph on the negative side, though far short of species extinction, lies the possibility that we’ve reached a point of no return when it comes to climate change, not in terms of the need to decarbonize, but in Roy Scranton’s sense of having set in motion feedback loops which we will not be able to stop and that will make human civilization as currently constituted impossible. Also here would be found the possibility that we’ve reached a plateau of sustainable complexity for a civilization.

These two possibilities might be correlated. The tangled complexity of the carbon economy, including its political aspects, makes addressing climate change extremely difficult. To replace fossil fuels requires not just a new energy system, but new ways to grow our food, produce our chemicals, and build our roads. It requires the deconstruction of vast industries that possess a huge amount of political power during precisely the time when wealth has seized control of politics, and the willing surrender of power and wealth by hydrocarbon states, including, now, the most powerful country on the planet.

If being faced with a problem of seemingly intractable complexity is the story we tell ourselves, then we should probably start preparing for scenarios in which we fail to crack the code before the bomb goes off. That would mean planning for a humane retreat by simplifying and localizing whatever can be, increasing our capacity to aid one another across borders, including ways to absorb refugees fleeing deteriorating conditions, and making preparations to shorten, as much as possible, any period of intellectual and scientific darkness and suffering that would occur in conditions of systemic breakdown.

Perhaps the most important story Frank provides is a way of getting ourselves out of an older one. Almost since the dawn of human space exploration, science-fiction writers, and figures influenced by science fiction such as Elon Musk, have been obsessed with the Kardashev scale. This is the idea that technological civilizations can be grouped into types, where the lowest tap the full energy of their home planet, the next up the energy of their sun, and the last all the energy of their galaxy. It’s an idea that basically extends the industrial revolution into outer space, and Frank will have none of it. A mature civilization, in his view, wouldn’t use all the energy of its biosphere, because to do so would leave it without a world in which it could live. Instead, what he calls a Class 5 civilization would maximize the efficient use of energy for both itself and the rest of the living world. It’s an end state rather than a beginning, but perhaps we might have reached that destination without the painful, and increasingly unlikely, transition we will now need to make in order to do so.

There’s an interesting section in Light of the Stars where Frank discusses the possible energy paths to modernization. He doesn’t just list fossil fuels, but also hydro, wind, solar and nuclear as possible sources of energy a civilization could tap to become technological. I might have once wondered whether an industrial revolution was even possible had fossil fuels not allowed us to take the first step, but I didn’t need to wonder. The answer was yes, a green industrial revolution was at least possible. In fact, it almost happened.

No book has changed my understanding of the industrial revolution more than Andreas Malm’s Fossil Capital. There I learned that the early days of industrialization in the United Kingdom consisted of a battle over whether water or coal would power the dawning age of machines, a battle water only just barely lost. What mattered in coal’s victory over water, according to Malm’s story, wasn’t so much coal’s superiority as a fuel source as it was the political economy of fossil fuels. The distributed nature of the rivers needed to power water wheels left employers at a disadvantage to labor, whereas coal allowed factories to be concentrated in one place, cities, where labor was easy to find and thus easily dismissed. This is quite the opposite of what happened in the mines themselves, where concentration, by giving the working class access to vital choke points, empowered labor, a situation that eventually led to coal being supplanted by oil, a form of energy impervious to national strikes.

But we probably shouldn’t take the idea of a green industrial revolution all that far. Water might have been capable of providing the same amount of energy for stationary machines as steam derived from burning coal, but it would not have had the same potential when it came to generating heat for locomotion or the production of steel. At least not within the constraints of 19th-century technology.

In another one of those strange, and all too common, mountains-from-molehills moments of human history, it may have been a simple case of greed that birthed the industrial revolution. The greed of owners wanting to capture the maximum income from their workers drove them to choose coal as their source of power, a choice which soon birthed a whole, and otherwise unlikely, infrastructure for steel and the world-shrinking machines built from it.

In other words, energy transitions are political and moral and have always been so. In a way, looking to hypothetical civilizations in the cosmos that may have succeeded or failed in these transitions lends itself to ignoring these questions of values and politics at the core of our dilemma, and thus fails to provide the kind of map to the future Frank was hoping for. He assumes an already politically empowered “we” exists when in fact it is something that needs to be built in light of the very real divisions between countries and classes, the old and the young, humans and non-humans, and even between those living in the present and those yet to be born.

The outcome of such a conflict isn’t really a matter of our species maturing, for history likely has no such telos, no set terminus or promised land to arrive at, only a perpetual rise and fall. Nonetheless, one might consider it to be a story, and there really are villains and heroes in the tale. Whether that story will ultimately be deemed to have been a triumph, a tragedy, or more likely something in between, is a matter of which 10 percent all of us among the swayable 80 ultimately side with.