Perhaps the main problem with the case made by Pankaj Mishra in his Age of Anger is that it gives an outsized place to intellectuals and the ideas that inspire them, people and their works like Mishra and his books, and as a consequence fails to bring to light the material forces that are such ideas’ true source.

It’s one thing to be aware that today’s neo-liberalism, and the current populist revolt against it, have roots stretching back to the Enlightenment and Rousseau’s revolt against it, and to be made aware that there’s a contradiction at the heart of the Enlightenment project that has yet to be resolved. It’s quite another thing to puzzle out why even a likely doomed revolt against this project is taking place right now as opposed to a decade or even decades ago. To do that one needs to turn to insights from sociology and political economy, for if the crisis we are in is truly global, how is it so, and is it the same everywhere, or does it vary across regions?

The big trend that defines our age as much as any other is the growing littoralisation of human populations, and capital. In the developing world this means the creation of mega-cities. By 2050, 75 percent of humanity will be urbanized. India alone might have 6 cities with a population of over 10 million.

What’s driving littoralisation in the developing world? I won’t deny that part of mass migration to the cities can be explained by people seeking more opportunities for themselves and especially for their children. It’s also the case that globalization has compelled regions to specialize in the face of cheap food and goods from elsewhere and thus reduced the opportunities for employment. Yet perhaps one of the biggest, and least discussed, reasons for littoralisation in the developing world is that huge tracts of land are being bought, often by outside capitalists, to set up massive plantations, industrial farms and mines.

It’s a process the urban sociologist Saskia Sassen describes in great detail in her book Expulsions: Brutality and Complexity in the Global Economy, where she writes:

A recent report from the Oakland Institute suggests that during 2009 alone, foreign investors acquired nearly 60 million hectares of land in Africa.

Further, Oxfam estimates that between 2008 and 2009, deals by foreign investors for agricultural land increased by 200 percent. (94-95)

I assume the spread of military-grade satellite imaging will only make these kinds of massive purchases easier, as companies and wealthy individuals are able to spot heretofore obscured investment opportunities in countries whose politicians can easily be bought, where the ability of the public to resist such purchases is minimal, and in an environment where developed world governments no longer exercise any oversight of such activities.

For developing world states strong enough to constrain foreign capital, these processes are often more internally than externally driven. Regardless, much of littoralisation is driven by the expulsion of the poor as the owning classes use their political influence to chase greater returns on capital, often oblivious to the social consequences. In that sense it’s little different from the capitalism we’ve had since that system’s very beginnings, which, after all, began with the conquest of the New World, slavery, the dissolution of the monasteries, and the enclosure movement.

What makes this current iteration of capitalism’s perennial features somewhat different is the role played by automation. I’ll get to that in a moment, but first it’s important to see how the same trend towards littoralisation seen in the developing world is playing out much differently in advanced economies.

Whereas the developing world is seeing the mass movement of people to the cities, what the developed world is primarily experiencing is the movement of capital. Oddly, this has not meant that the percentage of overall wealth has shifted to the coasts, because at the same time that capital is becoming concentrated in a few major cities, those same cities are actually declining in their overall share of the population.

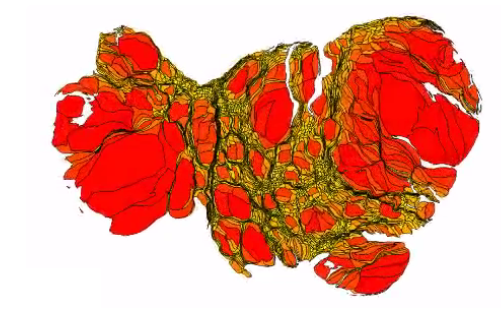

The biggest reason for this discrepancy appears to be the increasing price of real estate on the coast. Here’s what the US would look like if it was mapped by land values rather than area:

As is the case with the developing world, much of the change in land values appears to be driven by investments by capital not located in the city, and in many instances located abroad.

In the developed world littoralisation has almost all been about capital. Though an increasing amount of wealth is becoming located in a few great cities, structural reasons are preventing people from being able to move there. Foreign money, much of it of nefarious origins, has been pouring into global cities such as New York and London and driving up the cost of rent, let alone property ownership. Often such properties are left empty while, as Tim Wu has pointed out, inflated property values have turned the most valuable real estate into something resembling ghost towns.

This is a world that in a strange way was anticipated by William Gibson in his novel The Peripheral, where Gibson leveraged his knowledge of shady Russian real estate deals in London to imagine a future in which the rich actively interfere in the past of an Appalachian society in a state of collapse.

The evidence I have for this is merely anecdotal, but many of the Dominicans who are newly arrived in small Pennsylvania cities such as Bethlehem and Lancaster are recent refugees from the skyrocketing rent of New York. If this observation is correct, ethnic communities are being driven from large cities where wealth is increasing to interior regions with declining job prospects, which have not experienced mass immigration since the 1920s. In other words, we’ve set the stage for the rise of political nativism.

I said automation plays a role here that might make our capitalist era distinct from prior ones. The developed world has witnessed the hollowing out of the interior through automation before, when farm machinery reduced the number of farmers required as a percentage of the population from 64 percent in 1850, to around 15 percent in 1950, to just two percent today. The difference is that the decline of employment in agriculture occurred at the same time manufacturing employment was increasing, and this manufacturing was much less concentrated, supporting a plethora of small and mid-sized cities in the nation’s interior, and much less dependent on high skills, than the capitalism built around the global city and high-end services we have today.

Automation in manufacturing has been decimating employment in that sector even after it was initially pummeled by globalization. Indeed, the Washington Post has charted how districts that went for Trump in the last election map almost perfectly where the per capita use of robots has increased.

Again speaking merely anecdotally, a number of the immigrants I know are employed in one of Amazon’s “fulfillment centers” (warehouses) in Pennsylvania. Such warehouses are among the most hyper-automated and AI-directed businesses currently running at scale. It isn’t hard to see why the native middle class feels it is being crushed in a vice, and it’s been far too easy to mobilize human-against-human hate and deny, as Steven Mnuchin, Trump’s Treasury Secretary, recently did, that automation is even a problem.

These conditions are not limited to the US but likely played a role in the Brexit vote in the UK and are even more pronounced in France where a declining industrial interior is the source of the far-right Marine Le Pen’s base of support.

The decline of industrial employment has meant that employees have been pushed into much less remunerative (on account of being much less unionized) services, that is, if the dislocated are employed at all. This relocation to non-productive services might be one of the reasons why, despite the thrust of technology, overall labor productivity remains so anemic.

Yet, should the AI revolution live up to the hype, we should witness the flood of robots into services, a move that will place yet larger downward pressure on wages in the developed world.

The situation for developing economies is even worse. If the economist Dani Rodrik is right, developing economies are already suffering what he calls “premature de-industrialization”. The widespread application of robots threatens to make manufacturing in developed countries, sans workers, as cheap as products made by cheap labor in the developing world. Countries that have yet to industrialize will be barred from the development path followed by all societies since the industrial revolution, though perhaps labor in services will remain so cheap there that service sector automation does not take hold. My fear there is that instead of humans being replaced by robots, central direction via directing and monitoring “apps” will turn human beings into something all too robot-like.

A world where employment opportunities are decreasing everywhere, but where population continues to grow in places where wealth has never accumulated, and now cannot, means a world of increased illegal migration and refugee flows, the very forces that enabled Brexit, propelled Trump to the White House, and might just leave Le Pen in charge of France.

The apparent victory of the Kushner over the Bannon faction in the Trump White House luckily saves us from the most vicious ways to respond to these trends. It also means that one of the largest forces behind these dislocations, namely the moguls (like Kushner himself) who run the international real estate market, is now in charge of the country. My guess is that their “nationalism” will consist in gaining a level playing field for wealthy US institutions and individuals to invest abroad in the same way foreign players now do here. That, and that US investors will no longer have their “hands tied” by ethical standards that investors from countries like China do not face, so that weak countries are even further prevented from erecting barriers against capital.

Still, should the Bannon faction really have fallen apart, it will present an opportunity for the left to address these problems while avoiding the alt-right’s hyper-nationalistic solutions. Progressive solutions (at least in developed economies) might entail providing affordable housing for our cities, preventing shadow money from buying up real estate, unionizing services, and recognizing and offsetting the cost to workers of automation. UBI should be part of that mix.

The situation is much more difficult for developing countries, and there they will need to find their own, quite country-specific solutions. Advanced countries will need to help them as much as they can (including helping them restore barriers against ravenous capital) to manage their way into new forms of society, for the model of development that has run for nearly two centuries now appears to be irrevocably broken.