We’ve got a huge problem on our hands which the 2016 election, along with Brexit, has not so much created as fully exposed. What we’ve witnessed is a kind of short-circuit between the three pillars that have defined our particular form of democratic liberalism over the last century. Democratic liberalism over the 20th and into the 21st century consisted of a kind of balance between the public at large, mass media, and policy elites with the link between the three being political representatives of one of the major parties. As idealized by public philosophers such as Walter Lippmann, the role of politicians was to choose among the policy options presented by experts and “sell” those policies to the public using the tools of mass communication to ensure their legitimacy.

The fact that such a balance became the ideal in the first place, let alone its inevitable failure, can only be grasped fully when one becomes familiar with its history.

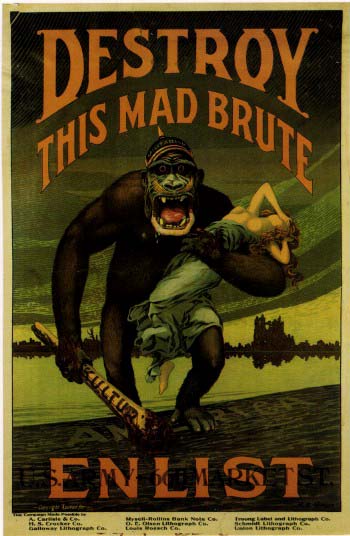

Non-print based mass media only became available during the course of the First World War, and it was here that the potential of media such as film, radio, posters and billboards to create a truly emotionally and ideologically unified public became apparent- although the US had come close to this discovery a little over a decade earlier in the form of mass circulation newspapers, which were instrumental in getting the American public behind the Spanish-American War, an episode that itself gave rise to real standards of objectivity in journalism.

During WWI it was the Americans and British who mastered the art of war propaganda, transforming their enemies the Germans into savage “huns” and engendering a kind of will to sacrifice for what (at least for the Americans) was a distant and abstract cause. Lippmann himself was on the Creel Committee, which launched this then new form of political propaganda. Hitler would write enviously of British and American propaganda in Mein Kampf, and both the Nazis and the Soviets would use the new media, and the proof of concept offered by the Allied powers in the war, to form the basis of the totalitarian state. Those systems ultimately failed, but their rise and attraction reveal the extent to which democracy, less than a century from our own time, was seen to be failing. Not just the victory of the Soviets in the war, but the way they were able to rapidly transform the Russian Empire from an agrarian backwater to an industrial and scientific powerhouse seemed to show that the future belonged to the system that most fully empowered its technocrats.

The Great Depression and Second World War would prove to be the golden age of experts in the West as well. In the US it was technocrats who crafted the response to the economic crisis, who managed the American economy during the war, who were responsible for technological breakthroughs such as atomic weapons, rockets capable of reaching space, and the first computers. It was policy experts who crafted novel responses to unprecedented political events such as the Marshall Plan and Containment.

Where the Western and Soviet view of the role of experts differed had less to do with their prominence and more to do with their plurality or lack of it. Whereas in the Soviet Union all experts were united under the umbrella of the Party, Western countries left the plurality of experts intact so that the bureaucrats who ran big business were distinct from the bureaucrats who ran government agencies and neither had any clear relationship to the parties that remained the source of mass political mobilization while the press remained free (if not free of elite assumptions and pressures) to forge the public’s interpretation of events as it liked.

Lippmann had hoped the revolutionary medium of his time- television- would finally provide a way for the technocrats he thought necessary to rule a society that had become too complex for the form of representative democracy that had preceded it, allowing experts to communicate directly with the public and in so doing forge consensus for elite policies. What dashed his hopes was a rigged game show.

The quiz show scandal that broke in the 1950’s (it was made into an excellent movie in the 90’s) proved to Lippmann that American style television, with its commercial pressures, could not be the medium he had hoped for. In his essay, Television: whose creature, whose servant? Lippmann called for the creation of an American version of the BBC. (PBS would be created in 1970, as would NPR). Indeed, the scandal did drive the three major US television networks- especially CBS- towards the coverage of serious news and critical reporting. Such reporting helped erode political support for the Vietnam war, though not, as is often believed, by turning public opinion against the war. Rather, as Michael Mandelbaum pointed out back in the 1980’s in his essay Vietnam: The Television War, it helped mobilize such a vast number of opponents as to polarize the American public in a way that made the post-war consensus unsustainable. Vietnam was the first large scale failure of the technocrats- it would not be their last.

From the 1970’s until today this polarization has been mined by a new entry on the media landscape- cable news- starting with Ted Turner and CNN. As Tim Wu lays out in his book The Master Switch, the rise of cable was in part enabled by Nixon’s mistrust of what was then “mainstream news” (Nixon helped deregulate cable). This rise (more accurately return) of partisan media occurred at the same time Noam Chomsky (owl of Minerva like) in his book Manufacturing Consent was arguing that the press was much less free and independent than it pretended to be. Instead it was wholly subservient to commercial influence and the groupthink of those posing as experts. And hadn’t, after all, George Kennan, the brilliant mind behind containment and an unapologetic elitist, compared American democracy to a monster with a brain the size of a pin?

Chomsky’s point held even in the era of cable news for there was a great deal of political diversity that fell outside the range between Fox News and CNN. Manufactured consent would fail, however, with the rise of the internet which would allow the cheap production and distribution of political speech in a way that had never been seen before, though there had been glimpses. Political speech was democratized at almost the exact same time trust in policy elites had collapsed. The reasons for such a collapse in trust aren’t hard to find.

American policy elites have embraced an economic agenda that has left working class income stagnant for over a generation. The globalization and de-unionization they promoted have played a large (though not the only) role in the decline of the middle class on which stable democracy depends. The Clinton machine bears a large responsibility for the left’s foolish embrace of this neoliberal agenda, which abandoned blue collar workers to transform the Democratic party into a vehicle for white collar professionals and identity groups.

Foreign policy elites, along with an uncritical mainstream media, led us into at least one disastrous and wholly unnecessary war in Iraq, a war whose consequences continue to be felt and which was exacerbated by yet more failure by these same elites. Our economic high-priests brought us the 2008 financial crisis, the response to which has been a coup by the owning classes at the cost of trillions of dollars. As Trump’s “populist” revolt of Goldman Sachs alums demonstrates, the oligarchs now thoroughly control American government.

And it’s not only social science experts, politicians and journalists who have earned the public’s lack of trust. Science itself is in a crisis of gaming where it seems “results” matter much more than the truth. Corporations engage in deliberate disinformation, the study of which Robert Proctor calls agnotology.

The three legs of Lippmann’s stool- policy experts, the media, and the public- have collapsed as expertise has become corporatized and politicians have become beholden to those corporate interests, while at the same time political speech has escaped from anyone’s overt control. Trump seems to be the first political figure to have capitalized on this breakdown- a fact that does not bode well for democracy’s future.

Perhaps we should just call a spade a spade and abandon political representation and policy experts for government via electronic referendum. Yet, however much I love the idea of direct democracy, it seems highly unlikely that the sort of highly complex society we currently possess could survive absent the heavy input of experts– even in light of their very obvious flaws.

It’s just as possible that China, where technocrats rule and political speech and activity are tightly controlled by leveraging the centralized nature of the internet, could be the real shape of the future. The current structure of the internet, which is controlled by only a handful of companies, certainly makes the path to such a plutocratic censorship regime possible.

Returning to the work of Tim Wu, we can see the way in which communications empires have risen and fallen over the course of the last century: we’ve had the telephone, film, radio, television and now the computer. In all cases, with the noted exception of television, new media have arisen in a decentralized fashion, merged into gigantic corporations such as Bell Telephone, and then later been broken up or lost dominance to upstarts who adopted new means of transmission or whole new types of media.

What perhaps makes our era different in a way Wu doesn’t explore is that for the first time diversity of content is occurring under conditions of concentrated ownership. Were only a handful of companies such as Facebook and Google to pursue the task in earnest, they could exercise nearly complete control over political speech and thus end the current era. Such rule need not be rapacious but could instead represent a kind of despotic-liberalism that mobilizes public opinion behind policies many of us care about, such as stemming global warming. It’s the kind of highly rational nightmare Malka Older imagined in her sci-fi thriller Infomocracy and Dave Eggers gave a darker hue in his book The Circle.

Hopefully liberalism itself, in the form of constitutional protections of free speech, will prevent us from going so far down this route. (Although the courts appear to think that the right of Google et al. to police their platforms’ content is itself protected under the First Amendment.) How our long-standing constitutional protections adapt to a world where “speech” can come in the form of bots which outnumber humans, and where foreign governments insert themselves into our elections, is anybody’s guess.

The best alternative to either despotic-liberalism or chaos is to restore trust in policy elites by finding ways to make such elites more accountable and therefore trustworthy. We need to come up with new ways to combine the necessary input of real experts with the revolution in communications that has turned every citizen into a source of media. For failing to find a way to rebalance expertise and democratic governance would mean we either lose our democracy to flawed experts (as Plato would have wanted) or surrender to the chaos of an equally flawed and fickle, and now seemingly permanently Balkanized, public opinion.