Jeff Stibel is either a genius when it comes to titles, or has one hell of an editor. The name of his recent book, Breakpoint: Why the Web Will Implode, Search Will Be Obsolete, and Everything Else You Need to Know About Technology Is in Your Brain, was about as intriguing a title as I had come across, at least since The Joy of x. In many ways, the book delivers on the promise of its title, making an incredibly compelling argument for how we should be looking at the trend lines in technology, and it is chock-full of surprising and original observations. The problem is that the book then turns around and arrives at almost the opposite of the conclusions one would expect. It wasn’t the Internet that imploded but my head.

Stibel’s argument in Breakpoint is that throughout nature and neurology, economics and technology, we see a common pattern: slow growth rising quickly to an exponential pace, followed by a rapid plateau, a “breakpoint” at which the rate of increase collapses, or even a sharp decline occurs, and future growth slows to a snail’s pace. One might think such breakpoints were a bad thing for whatever is undergoing them, and when they are followed by a crash they usually are, but in many cases it just ain’t so. When ant colonies undergo a breakpoint they are keeping themselves within a size that their pheromonal communication systems can handle. The human brain grows rapidly in connections between birth and age five, after which it loses a great many of those connections through pruning- a process that allows the brain to discard useless information and solidify the types of knowledge it needs- such as the common language being spoken in its environment.
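For readers who like to see a curve rather than read about one, here is a minimal sketch of that shape. It uses a plain logistic function as a stand-in, which is my assumption and not a model taken from Stibel's book, and the `capacity`, `rate` and `midpoint` values are arbitrary numbers picked for illustration. It only captures the plateau version of a breakpoint, not the version that ends in a crash.

```python
import math

def logistic(t, capacity=1000.0, rate=0.5, midpoint=20.0):
    """Size of a network at time t, growing toward a fixed carrying capacity.

    All three parameters are hypothetical illustration values.
    """
    return capacity / (1.0 + math.exp(-rate * (t - midpoint)))

def growth(t):
    """How much the network grows between time t and time t + 1."""
    return logistic(t + 1) - logistic(t)

if __name__ == "__main__":
    # Print size and per-step growth at a few points along the curve.
    for t in range(0, 40, 5):
        print(f"t={t:2d}  size={logistic(t):8.1f}  growth={growth(t):7.2f}")
```

Growth per step is tiny at the start, largest around the midpoint, and tiny again near the ceiling, which is the slow, fast, slow pattern described above.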

His thesis leads Stibel to all sorts of fascinating observations. Here are just a few: Precision takes a huge amount of energy, and human brains are error prone because they are trading this precision for efficiency. The example is mine, not Stibel’s, but it captures his point: if I did the math right, IBM’s Watson consumed about 4,000 times as much energy as its human opponents, and the machine, as impressive as it was, couldn’t drive itself there, or get its kids to school that morning, or compose a love poem about Alex Trebek. It could only answer trivia questions.

Stibel points out how the energy consumption of computers and the web is approaching what are likely hard energy ceilings. Continuing on its current trajectory the Internet will consume, in relatively short order, 20% of the world’s energy, about the same as the percentage of calories needed to run the human brain. That prospect makes the Internet’s growth rate under current conditions ultimately unsustainable, unless we really are determined to fry ourselves with global warming.

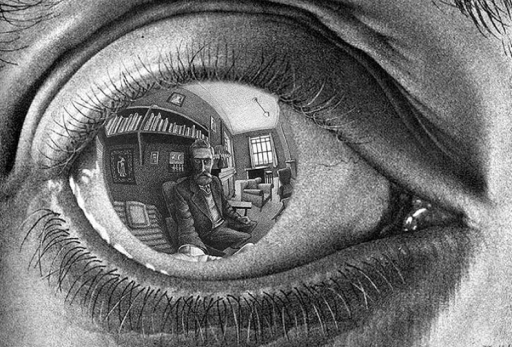

Indeed, this 20% mark seems to be a kind of boundary for intelligence, at least if the human brain is any indication. In what was, for me at least, another new and surprising observation, Stibel points out how the human brain has been steadily shrinking and losing connections over time. Pound for pound, our modern brain is actually “dumber” than that of our caveman ancestors. (Not sure how this gels with the Flynn effect.) Big brains are expensive for bodies to maintain, and their caloric ravenousness relative to other essential bodily functions must not be favored by evolution, otherwise we’d see more of our lopsided brain-to-body ratio in nature. As we’ve been able to offload functions to our tools and to our cultures, evolution has been shedding some of this cost in raw thinking prowess and slowly moving us back towards a more “natural” ratio.

If the Internet is going to survive it’s going to have to become more energy efficient as well. Stibel sees this already happening. Mobile has allowed targeted apps rather than websites to be the primary way we get information. Cloud computing allows computational prowess and memory to be distributed and brought together as needed. The need for increased efficiency, Stibel believes, will continue to change the nature of search too. Increasing personalization will allow for ever more targeted information, so that individuals can find just what they are looking for. This process of becoming “brainlike”, he speculates, may actually result in the emergence of something like consciousness from the web.

It is on these last two points, personalization and the emergence of consciousness from the Internet, that he lost me. Indeed, had Stibel held fast to his idea of the importance of breakpoints he might have seen both personalization and emergent consciousness from the Internet in a much different light.

The quote below captures Stibel’s view of personalization:

We’re moving towards search becoming a kind of personal assistant that knows an awful lot about you. As a side note, some of you may be feeling quite uncomfortable at this point with your new virtual friend. My advice: get used to it. The benefits will be worth it. As Kevin Kelly has said: “Total personalization in this new world will require total transparency. That is going to be the price. If you want to have total personalization, you have to be totally transparent.” (93)

I suppose the question one should ask of Stibel is transparent to whom and for what? The answer can be seen in the example he gives of transparency in action:

Imagine that the Internet can read your thoughts. Your personal computer, now a personal assistant, knows you skipped breakfast, just as your brain knows you skipped breakfast. She also knows that you have back to back meetings, but that your schedule just cleared. So she offers the suggestion “It’s 11:00am and you should really eat before your next meeting. D’Amore’s Pizza Express can deliver to you within 25 minutes. Shall I order your favorite, a large thin crust pizza, light on the cheese with extra red pepper flakes on the side?” (97)

The answer, as Stibel’s example makes apparent, is that one is transparent to advertisers and for them. In the example of D’Amore’s, what is presented as something that works for you is actually a device on loan to a restaurant- it is their “personal assistant”.

Transparent individuals become a kind of territory mined for resources by those capable of performing the data mining. For the individual being “mined” such extraction can be good or bad, and part of our problem, now and in the future, will be to give individuals the ability to control this mining and refract it in directions that better suit their interests. We need to decide for ourselves when it is good, and we want its benefits and are therefore willing to pay its costs, and when it is bad and we are not.

Stibel thinks personalization is part of the coming “obsolescence of search” and a response of the web to the need for increased efficiency as a way to avoid, for a time, reaching its breakpoint. Yet, looking at our digital data as a sort of contested territory gives us a different version of the web’s breakpoint than the one that Stibel gives us even if it flows naturally from his logic. The fact that corporations and other groups are attempting to court individuals on the basis of having gathered and analysed a host of intimate and not so intimate details on those individuals sparks all kinds of efforts to limit, protect, monopolize, subvert, or steal such information. This is the real “implosion” of the web.

We would do well to remember that the Internet really got its public start as a means of open exchange between scientists and academics, a community of common interest and mutual trust. Trust essentially entails the free flow of information- transparency- and as human beings we probably agree that transparency exists along a spectrum with more information provided to those closest to you and less the further out you go.

Reflecting its origins, the culture of the Internet in its initial years had this sense of widespread transparency and trust baked into our understanding of it. This period in Eden, even if it was just imagined, could not last forever. It has been a long time since the Internet was a community of trust, and it can’t be one; it’s just too damned big, even if it took a long time for us to realize this.

The scales have now fallen from our eyes, and we all know that the web has been a boon for all sorts of cyber-criminals and creeps and spooks, a theater of war between states. Recent events surrounding mass surveillance by state security services have amplified this cynicism and decline of trust. Trust, for humans, is like pheromones in Stibel’s ants- it sets the limits of how large a human community can be before breaking off to form a new one, unless some other way of keeping a community together is applied. So far, human societies have discovered three means of keeping intact societies that have grown beyond the capacity of circles of trust: ethnicity, religion and law.

Signs that trust has unraveled are not hard to find. There has been an incredible spike in interest in anti-transparency technologies, with “crypto-parties” now being a phenomenon in tech circles. A lot of this interest is coming from private citizens, and sometimes, yes, criminals. Technologies that offer a bubble of protection for individuals against government and corporate snooping seem to be all the rage. Yet even more interest is coming from governments and businesses themselves. Some now seem to want exclusive rights to a “mining territory”- to spy on, and sometimes protect, their own citizens and customers in a domain with established borders. There are, in other words, splintering pressures building against the Internet, or, as Steven Levy stated, there are increased rumblings of:

… a movement to balkanize the Internet—a long-standing effort that would potentially destroy the web itself. The basic notion is that the personal data of a nation’s citizens should be stored on servers within its borders. For some proponents of the idea it’s a form of protectionism, a prod for nationals to use local IT services. For others it’s a way to make it easier for a country to snoop on its own citizens. The idea never posed much of a threat, until the NSA leaks—and the fears of foreign surveillance they sparked—caused some countries to seriously pursue it. After learning that the NSA had bugged her, Brazilian president Dilma Rousseff began pushing a law requiring that the personal data of Brazilians be stored inside the country. Malaysia recently enacted a similar law, and India is also pursuing data protectionism.

As John Schinasi points out in his paper Practicing Privacy Online: Examining Data Protection Regulations Through Google’s Global Expansion, even before the Snowden revelations, which sparked the widespread breakdown of public trust, or even just heated public debate regarding such trust, there were huge differences between different regimes of trust on the Internet, with the US being an area where information was exchanged most freely and privacy against corporations considered contrary to the spirit of American capitalism.

Europe, on the other hand, on account of its history, had in the early years of the Internet taken a different stand, adhering to an EU directive that was deeply cognizant of the dangers of too much trust being granted to corporations and the state. The problem was that this directive, dating from 1995, is so antiquated that it not only failed to reflect the Internet as it has evolved, but severely compromised the way the Internet in Europe now works. The way the directive was implemented turned Europe into a patchwork quilt of privacy laws, which was onerous for American companies, but which they were often able to circumvent, being largely self-policing in any case under the so-called Safe Harbor provisions.

Then there is the whole different ball game of China, which Schinasi characterizes as a place where the Internet is seen by officialdom, without apology or sense of limits, as a tool for monitoring its own citizens, with huge restrictions placed on the extension of trust to entities beyond its borders. China under its current regime seems dedicated to carving out its own highly controlled space on the Internet, a partnership between its Internet giants and its control freak government, something which we can hope the desire of such companies to go global might eventually help temper.

The US and Europe, in a process largely sparked by the Snowden revelations, appear to be drifting apart. Just last week, on March 12, 2014, the European parliament, by an overwhelming majority of 621 to 10 (I didn’t forget a zero), passed a law that aims at bringing some uniformity to the chaos of European privacy laws and that would severely restrict the way personal data is used and collected, essentially upending the American transparency model. (Snowden himself testified to the parliament by video link.) The Safe Harbor provisions have not yet been nixed, as that would take a decision of the European Council rather than the parliament, but given the broad support for the other changes they are clearly in jeopardy. If these trends continue they would constitute something of a breaking apart and consolidation of the Internet- a sad end to the utopian hopes of a global and transparent society that sprang from the Internet’s birth.

Yet, if Stibel’s thesis about breakpoints is correct, it may also be part of a “natural” process. Where Stibel was really good was when it came to, well… ants. As he repeatedly shows, ants have this amazing capacity to know when their colony, their network, has grown too large and when it’s time to split up and send out a new queen. Human beings are really good at this formation into separate groups too. In fact, as Mark Pagel points out in his Wired for Culture, it’s one of the two things human beings are naturally wired to do: to form groups which break up once they have exceeded the number of people that any one individual can know on a deep level- a number that remains, even in the era of Facebook “friends”, right around where it was when we were setting out from Africa 60,000 years ago- about 150.

If we go by the example of ants and human beings the natural breakpoint(s) for the Internet is where bonds of trust become too loose. Where trust is absent, such as in large scale human societies, we have, as mentioned, come up with three major solutions of which only law, rather than ethnicity or religion, is applicable to the Internet.

What we are seeing is the Internet moving towards splitting itself off into rival spheres of trust, deception, protection and control. The only thing that could keep it together as a universal entity would be the adoption of global international law, as opposed to mere law within and between a limited number of countries. Law that regulated how the Internet is used, restrained states from using the tools of cyber-espionage and, what often amounts to the same thing, cyber-war, and established international agreements on how exactly corporations could use customer information and how citizens should be informed regarding the use of their data by companies and the state would allow the universal promise of the Internet to survive. This would be the kind of “Magna Carta for the Internet” that Sir Tim Berners-Lee, “the man who wrote the first draft of the first proposal for what would become the world wide web”, is calling for with his Web We Want initiative.

If we get to the destination proposed by Berners-Lee, our arrival might owe as much to the push of self-interest from multinational corporations as to the pull of noble efforts by defenders of the world’s civil liberties. For it is plausible that the desire of Internet giants to be global companies may help spur the adoption of higher limits against government spying in the name of corporate protections against “industrial” espionage, protections that might intersect with the desire to protect global civil society as seen in the efforts of Berners-Lee and others, and that will help establish a firmer ground for the protection of political freedom for individual citizens everywhere. We’ll probably need both push and pull to stem, let alone roll back, the current regime of mass surveillance we have allowed to be built around us.

Thus, those interested in political freedom should throw their support behind Berners-Lee’s efforts. The erection of a “territory” in which higher standards of data protection prevail, as seen in the current moves of the EU, isn’t at this juncture contrary to a data regime such as that which Berners-Lee proposed, where a “Bill of Rights for the Internet” is adhered to, but helps this process along. By creating an alternative to the current transparency model being promoted by American corporations and abused by America’s security services, one which is embraced by Chinese state capitalism as a tool of the authoritarian state, the EU’s efforts, if successful, would offer a region where the privacy (including corporate privacy) necessary for political freedom continues to be held sacred and protected.

Even if efforts such as those of Berners-Lee to globalize these protections should fail, which sadly appears ultimately most likely, efforts such as those of the EU would create a bubble of protection- a 21st century version of the medieval fortress and city walls. We would do well to remember that underneath our definition of the law lies an understanding of law as a type of wall, hence the fact that we can speak of both being “breached”. Laws, like the rules of a sports game, are simply a set of rules that are agreed to within a certain defined arena. The more bounded the arena, the easier it is to establish a set of clearly defined and adhered to rules.

To return to Stibel, all this has implications for the other idea he explored and about which I also have doubts- the emergence of consciousness from the Internet. As he states:

It took millions of years for human to gain intelligence, but it may only take a century for the Internet. The convergence of computer networks and neural networks is the key to creating real intelligence from artificial machines.

I largely agree with Stibel, especially when he echoes Dan Dennett in saying that artificial intelligence will be about as much like our human consciousness as the airplane is like a bird: some similarities in terms of underlying principle, but huge differences in engineering and manifestation. That means the path to machine intelligence probably doesn’t lie in the brute computational force tried since the 1950s or the current obsession with reverse engineering the brain, but in networks. Thing is, I just wish he had said “internets”, as in the plural, rather than “Internet” singular, or just “networks”, again plural. For my taste, Stibel has a tone when he’s talking about the emergence of intelligence from the Internet that leans a little too closely to Teilhard de Chardin and his Noosphere or Kevin Kelly and his Technium, all of which could have been avoided had Stibel just stuck with the logic of his breakpoints.

Indeed, given the amount of space he had given to showing how anomalous our human intelligence was and how networks (ants and others) could show intelligent behavior without human type consciousness at all, I was left to wonder why our networks would ever become conscious in the way we are in the first place. If intelligence could emerge from networked computers, as Stibel suggests, it seems more likely to emerge from well-bounded constellations of such computers rather than from the network as a global whole- as in our current Internet. If the emergence of AI resembles, which is not the same as replicates, the evolution and principles of the brain, it will probably require the same sorts of sharp boundaries we have, the pruning that takes place as we individualize, self-referential goals similar to our own, and some degree of opacity vis-à-vis other similar entities.

To be fair to Stibel, he admits that we may have already undergone the singularity in something like this sense. What he does not see is that ants or immune systems or economies give us alternative models of how something can be incredibly intelligent and complex but not conscious in the human sense- perhaps human type consciousness is a very strange anomaly rather than an almost pre-determined evolutionary path once the rising complexity train gains enough momentum. AI in this understanding would merely entail truly purposeful coordinated action and goal seeking by complex units, a dangerous situation indeed given that these large units will often be rivals, but one not existentially distinct from what human beings have known since we were advanced enough technologically to live in large cities or fight with massive armies or produce and trade with continent and world straddling corporations.

Be all that as it may, Stibel’s Breakpoint was still a fascinating read. He not only left me with lots of cool and bizarre tidbits about the world I had not known before, he gave me a new way to think about the old problem of whither our society was headed and if in fact we might be approaching limits to the development of civilization which the scientific and industrial revolution had seemed to suggest we had freed ourselves eternally from. Stibel’s breakpoints were another way for me to understand Joseph A. Tainter’s idea of how and why complex societies collapse and why such collapse should not of necessity fill us with pessimism and doom. Here’s me on Tainter:

The only long lasting solution Tainter sees for increasing marginal utility is for a society to become less complex that is less integrated more based on what can be provided locally than on sprawling networks and specialization. Tainter wanted to move us away from seeing the evolution of the Roman Empire into the feudal system as the “death” of a civilization. Rather, he sees the societies human beings have built to be extremely adaptable and resilient. When the problem of increasing complexity becomes impossible to solve societies move towards less complexity.

Exponential trends might not be leading us to a stark choice between global society and singularity or our own destruction. We might just be approaching some of Stibel’s breakpoints, and as long as we can keep our wits about us, and not act out of a heightened fear of losing dwindling advantages for us and ours, breakpoints aren’t all necessarily bad- and can even, sometimes, be good.