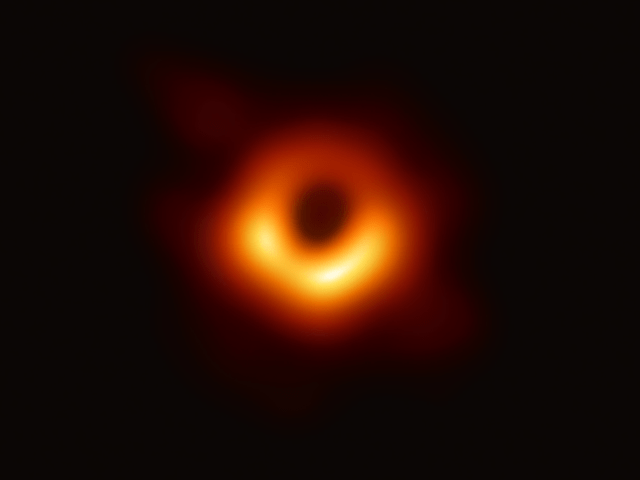

In homage to the international team of scientists working on the Event Horizon Telescope who earlier this month gave us the first ever image of a black hole, below is an essay I wrote for Harvard’s BHI essay competition late last year. The weird reality of the universe we call home never ceases to humble and astound me, nor, when we work together as a species, does our ingenuity at uncovering the sublime order of the natural world cease to be a source of hope and pride.

____________________________

“Of all the conceptions of the human mind, from unicorns to gargoyles to the hydrogen bomb, the most fantastic, perhaps, is the black hole…” Kip Thorne

In the last century we’ve experienced an expansion of cosmic horizons that dwarfs any that occurred before. It’s a universe far larger and weirder than we could have imagined, held together by dark matter and propelled apart by dark energy, neither of which we truly understand. And out of all the things in this wide Alice in Wonderland universe, nothing is as mind-blowingly weird as black holes.

Our expanded horizon has been made possible by a revolution in the tools used by astronomers: vastly improved optical telescopes and the birth of radio, gamma ray, infrared, and x-ray astronomy. Only in the last few years have we seen the rise of astronomy based on gravitational waves, the ripples of space-time. Gravitational wave detection gives us the ability not to see but, in some sense, to listen to black holes. [i] The question is how many of us will pay any attention to what they are saying?

For what is striking about this recent expansion of our cosmic horizons is how little it has impacted the world outside of science itself, the world of artists, authors, philosophers. [ii]

Certainly, the popularity of movies such as Interstellar suggests a public hunger for the question-begging awe implied by contemporary cosmology, but a comparison with the past reveals just how meh our reaction has been. One could lay blame for this disinterest on our selfie- and celebrity-based culture, but the problem goes deeper than that, and goes beyond the old complaint about the “two cultures”. [iii] What I think we’ve lost is our ability to experience the natural sublime.

The only expansion of human imagination in terms of our place in the universe that even comes close to the one we’ve experienced in the last century was the one that ended our pre-Copernican view of the world. Copernicus and those who followed him gave us a new cosmos with the earth no longer at its center and threw the meaning of humanity into question. Yet these discoveries didn’t just circulate among early scientists, but quickly obsessed theologians, poets and philosophers who wrestled with what the new post-Copernican world meant for humanity’s place in the universe.

Many of their reflections express not so much joy at our expanded horizons as a sentiment akin to vertigo, as if people on a previously stable earth had been turned upside down and they were in danger of plunging into a sky that had become an infinite pit. In other words, they were seriously weirded out.

Take the famous 18th century fable of Carazan’s Dream. Carazan, a miserly Baghdad merchant, is tossed “up” into hell upon his death. He finds not tortures, but a journey through the infinite blackness of space. It is “A dreadful region of eternal silence, loneliness, and darkness” that envelops Carazan as he loses sight of the illumination of the last stars. He is filled with unbearable anguish as he realizes that even after traveling “ten-thousand times a thousand years” his journey into blackness would not be at an end. [iv]

The 18th century poet and children’s writer Anna Laetitia Barbauld, in her poem “A Summer Evening’s Meditation”, expressed something similar.

What hand unseen

Impells me onward thro’ the glowing orbs

Of habitable nature, far remote,

To the dread confines of eternal night,

To solitudes of vast unpeopled space [v]

In the 18th century Edmund Burke termed this strange mixture of foreboding and awe the sublime. [vi] It’s an experience that comes not just from looking up into an infinite sky, but whenever one experiences nature in a way that puts our limited human scale and power in perspective. Immanuel Kant brought the sublime into the realm of mathematics, noting that phenomena for which our everyday imagination showed itself to be woefully insufficient proved tractable with the use of mathematical thinking. Math was a tool for grabbing hold of the weird. [vii]

Another philosopher, Arthur Schopenhauer, went even deeper. For him, the experience of the sublime had nothing to do with fear or anxiety. Instead, it was the result of a kind of tension that emerged from contemplating the scale of nature relative to our own limits while simultaneously understanding it. Nature that seems so beyond us is instead in us, indeed is us. [viii]

It’s only a short step from this psychologizing of the sublime found in nature to seeing the sublime as originating in technology itself. For what made the natural sublime perceivable in the first place wasn’t so much the capabilities of the individual human mind as the whole history of science and technology up until that point, the knowledge that allowed us to finally apprehend just how large and weird our cosmic home actually was. Thus the natural sublime emerged in parallel with the idea of the technological sublime that would eventually swallow it. [ix]

Starting in the 19th century, technology seemed to liberate us from nature. Our own creations became global in scope and were often looked upon with the same quasi-religious awe once limited to natural phenomena. Yet after the world wars, the creation of nuclear weapons, and the growing environmental consciousness of the 20th century, many non-scientists, with good reason, abandoned this idea of the technological sublime. Unfortunately, because the most powerful examples of the sublime in nature are only perceivable through the technology they distrusted, the natural sublime was rejected as well. [x]

Among the technologists of Silicon Valley and elsewhere, belief in the technological sublime never really went away, and we’re still under its spell. Though the technological infrastructure we have built across and above the earth has been of great benefit to the material wellbeing of billions of people, culturally, that same structure has proven almost black-hole-like in its ability to torque our attention back upon ourselves. [xi] The most vocal proponents of the technological sublime use this very language. Our destiny is to enter the technological “singularity” and any attempts to see beyond it are futile. In a rejection of the universe Copernicus gave us, we’re back at the center of events. [xii]

Black holes seem almost ready-made to provide a rejoinder to this kind of anthropocentric hubris, and if attended to, may even help us recover our sense of the natural sublime.

They dwarf anything created by human civilization in a way almost impossible to express, with the mass of the largest discovered being 17 billion times larger than that of the sun. [xiii] It’s such scale that allows them to exist in the first place. Under normal circumstances gravity is by far the weakest of the four forces, but with enough mass this weakling breaks out of its chains and becomes monstrous. The force of gravity becomes so large that space curves back upon itself, every path outward becomes a path inward towards the center, where even light itself is no longer fast enough to escape the compression of space that veers to infinity, the real singularity.

As objects get closer to the event horizon, beyond which nothing but radiation escapes, they rotate around this cosmic drain faster and faster, whole stars whipping around the black hole at speeds as fast as 93,000 miles per hour. [xiv] As matter is pulled towards absolute blackness, it gives rise to quasars, the brightest objects in the universe, glowing with the light of millions of suns.

Falling into a black hole really would be like something out of Carazan’s Dream, only weirder.

From the perspective of an outside observer, someone slipping into a massive black hole would slow to the point of being frozen, only to be vaporized. Yet the person who actually fell in wouldn’t experience anything at all except the movement ever forward towards the singularity, in the same way we can only move forward in time. But what does that even mean? Here we come upon our limits: the apparent irreconcilability of quantum mechanics and general relativity, the limits of computation in finite time. [xv]

Long after the last star has burned out, black holes and their weirdness will survive. The only energy source in the universe will be their sluggardly Hawking radiation until, after quadrillions of years, they too melt away. [xvi] Perhaps future civilizations will float just outside the death grip of the event horizon to siphon off the energy of the black hole at the center of the Milky Way, a mother lode with more energy than all of our galaxy’s billions of stars. [xvii] Perhaps spacefaring civilizations don’t head for the stars, but towards the black. [xviii] Maybe black holes are the womb of space-time, giving rise to universes like and unlike our own. Or perhaps, if intelligences like ourselves survive, they will use the wonders of the abyss to create the world anew, which would mean that, in the very long run, the believers in the technological sublime were actually right after all.

[i] Levin, Janna. Black Hole Blues: and Other Songs from Outer Space. Anchor Books, a Division of Penguin Random House LLC, 2017.

[ii] There are, of course, exceptions. An excellent example of which is: Robinson, Marilynne. What Are We Doing Here? Farrar, Straus and Giroux, 2018.

[iii] Snow, C. P., and Stefan Collini. The Two Cultures. Cambridge University Press, 2014.

[iv] Fisher, Anne. The Pleasing Instructor Or Entertaining Moralist. G. Robinson, 1777. p. 143

[v] Barbauld, Anna Laetitia, and Lucy Aikin. The Works of Anna Laetitia Barbauld: with a Memoir. Cambridge University Press, 2014. p. 122

[vi] Burke wasn’t the first, but he was certainly the most influential figure to bring the sublime into the public discourse with his: Burke, Edmund, et al. A Philosophical Enquiry into the Origin of Our Ideas of the Sublime and Beautiful. Printed for R. and J. Taylor, 1772.

[vii] Kant, Immanuel, et al. Critique of Judgement. Oxford Univ. Press, 2008. p. 87

[viii] Schopenhauer, Arthur. The World as Will and Representation. Vol. 1, Cambridge University Press, 2010. p. 230

[ix] Miller, Perry. The Life of the Mind in America: From the Revolution to the Civil War. Harcourt, Brace & World, 1970.

[x] A good recent example of this turn against the natural sublime is: Marris, Emma. Rambunctious Garden: Saving Nature in a Post-Wild World. Bloomsbury, 2013.

[xi] A point beautifully made in: Billings, Lee. Five Billion Years of Solitude: the Search for Life among the Stars. Current, 2014.

[xii] Kurzweil, Ray. The Singularity Is Near: When Humans Transcend Biology. Duckworth, 2016.

[xiii] Thomas, Jens, et al. “A 17-Billion-Solar-Mass Black Hole in a Group Galaxy with a Diffuse Core.” Nature, vol. 532, no. 7599, 2016, pp. 340–342., doi:10.1038/nature17197.

[xiv] The fastest orbit of a star around a black hole ever recorded: Kuulkers, E., et al. “MAXI J1659−152: the Shortest Orbital Period Black-Hole Transient in Outburst.” Astronomy & Astrophysics, vol. 552, 2013, doi:10.1051/0004-6361/201219447.

[xv] Harlow, Daniel, and Patrick Hayden. “Quantum Computation vs. Firewalls.” Journal of High Energy Physics, vol. 2013, no. 6, 2013, doi:10.1007/jhep06(2013)085.

[xvi] Adams, Fred, and Greg Laughlin. The Five Ages of the Universe: inside the Physics of Eternity. Touchstone, 2000.

[xvii] Lawrence, Albion, and Emil Martinec. “Black Hole Evaporation along Macroscopic Strings.” Physical Review D, vol. 50, no. 4, 1994, pp. 2680–2691., doi:10.1103/physrevd.50.2680.

[xviii] It has been suggested that one solution to the Fermi Paradox is that the aliens are “hibernating”, waiting for the Black Hole Era when the universe has cooled enough to make computation extremely efficient: Sandberg, Anders, et al. “That Is Not Dead Which Can Eternal Lie: the Aestivation Hypothesis for Resolving Fermi’s Paradox.” arXiv, 27 Apr. 2017, arxiv.org/abs/1705.03394v1.