The last decade or so has seen a renaissance in the idea that human beings are something far short of rational creatures. Here are just a few prominent examples. There was Nassim Taleb with his The Black Swan, published before the onset of the financial crisis, which presented Wall Street traders caught in the grip of optimistic narrative fallacies that led them to “dance” their way right over a cliff. There was the work of Philip Tetlock, which showed that the predictions of most so-called experts were about as accurate as chimps throwing darts. There were explorations into how hard-wired our ideological biases are, such as that of Jonathan Haidt in his The Righteous Mind.

There was a sustained retreat from the utilitarian calculator of homo economicus, seen in the rise of behavioral economics and popularized in the book Nudge, which took human irrational quirks at face value and tried to find workarounds, subtle ways to trick the irrational mind into doing things in its own long-term interest. Of all these uncoverings of the less than fully rational actors that most of us, no, all of us, are, none has been more influential than the work of Daniel Kahneman, a psychologist, Nobel laureate in economics, and author of the bestseller Thinking, Fast and Slow.

The surprising thing, to me at least, is that we forgot we were less than fully rational in the first place. We have known this since before we even understood what rationality, meaning holding to the principle of non-contradiction, was. How else do you explain Ulysses’ pact, where the hero had himself chained to his ship’s mast so he could both hear the sweet song of the sirens and not plunge to his own death? If the Enlightenment had made us forget the weakness of our rational souls, Nietzsche and Freud eventually reminded us.

The 20th century and its horrors should have laid to rest the Enlightenment idea that with increasing education would come a golden age of human rationality, as Germany, perhaps Europe’s most enlightened state, became the home of its most heinous regime. Though perhaps one might say that what the mid-20th century faced was not so much a crisis of irrationality as the experience of the moral dangers and absurdities into which closed rational systems, holding to their premises as axiomatic articles of faith, could lead. Nazi and Soviet totalitarianism had something of this crazed hyper-rational quality, as did the Cold War nuclear suicide pact of mutually assured destruction (MAD).

As far as domestic politics and society were concerned, however, the US largely avoided the breakdown in the Enlightenment ideal of human rationality as the basis for modern society. It was a while before we got the news. It took a series of institutional failures (9/11, the financial crisis, the botched, unnecessary wars in Afghanistan and Iraq) to wake us up to our own thickheadedness.

Human folly has now come in for a pretty severe beating, but something in me has always hated a weakling being picked on, and finds it much more compelling to root for the underdog. What if we’ve been too tough on our less than perfect rationality? What if our inability to be efficient probability calculators or computers isn’t always a design flaw but sometimes one of our strengths?

I am not the first person to propose such a heresy of irrationalism. Way back in 1509 the humanist Desiderius Erasmus wrote his riotous The Praise of Folly with something like this in mind. The book has what amounts to three parts, all in the form of a speech by the goddess of Folly. The first part attempts to show how, in the world of everyday people, Folly makes the world go round and guides most of what human beings do. The second part is a critique of the society that surrounded Erasmus, a world of inept and corrupt kings, princes, popes and philosophers. The third part attempts to show how all good Christians, including Christ himself, are fools, and here Erasmus means fool as a compliment. As we all should know, good-hearted people, who are for that very reason naive, often end up being crushed by the calculating and rapacious.

It’s the lessons of the first part of The Praise of Folly that I am mostly interested in here. Many of Erasmus’ intuitions can not only be backed up by the empirical psychological studies of Daniel Kahneman, they also allow us to turn the import of Kahneman’s findings on its head. Foolish, no?

Here are some examples. Take the very simple act of a person opening their own business. The fact that anyone takes on such a risk while believing in their likely success is an example of what Kahneman calls optimism bias. Overconfident entrepreneurs are blind to the odds. As Kahneman puts it:

The chances that a small business will survive in the United States are about 35%. But the individuals who open such businesses do not believe statistics apply to them. (256)

Optimism bias may result in the majority of entrepreneurs going under, but what would we do without it? The sheer creative-destructive churn of these ventures surely has some positive net effect on our economy and the employment picture, and those 35% of successful businesses are most likely adding long-lasting and beneficial tweaks to modern life, and sometimes even revolutions born in garages.

Yet, optimism bias doesn’t only result in entrepreneurial risk. Erasmus describes such foolishness this way:

To these (fools) are to be added those plodding virtuosos that plunder the most inward recesses of nature for the pillage of a new invention and rake over sea and land for the turning up some hitherto latent mystery and are so continually tickled with the hopes of success that they spare for no cost nor pains but trudge on and upon a defeat in one attempt courageously tack about to another and fall upon new experiments never giving over till they have calcined their whole estate to ashes and have not money enough left unmelted to purchase one crucible or limbeck…

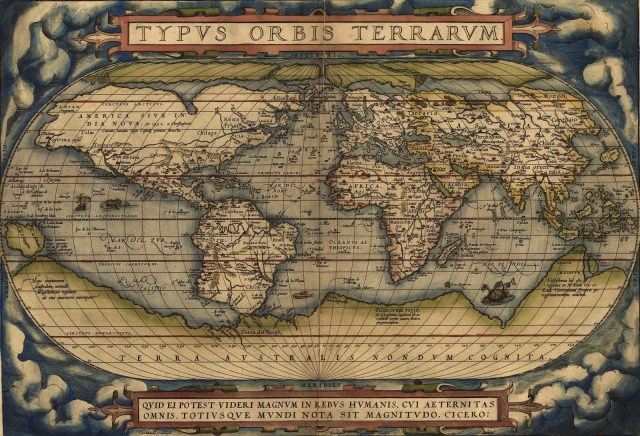

That is, optimism bias isn’t just something to be found in business risks. Musicians and actors dreaming of becoming stars, struggling fiction authors, explorers and scientists can all be said to be biased towards the prospect of their own success. Were human beings accurate probability calculators, where would we get our artists? Where would our explorers have come from? Instead of culture we would all (if it weren’t already too much the case) be trapped permanently in cubicles of practicality.

Optimism bias is just one of the many human cognitive flaws Kahneman identifies. Take another one, the error of affective forecasting, and its role in those oh so important human institutions, marriage and children. For Kahneman, many people base their decision to get married on how they feel when in the swirl of romance, thinking that marriage will somehow secure this happiness indefinitely. Yet the fact is that marriage does little for the day-to-day happiness of married persons. According to Kahneman:

Unless they think happy thoughts about their marriage for much of the day, it will not directly influence their happiness. Even newlyweds who are lucky enough to enjoy a state of happy preoccupation with their love will eventually return to earth, and their experienced happiness will again depend, as it does for the rest of us, on the environment and activities of the present moment. (400)

Unless one is under the impression that we no longer need marriage or children, again, one can be genuinely pleased that, at least in some cases, we suffer from the cognitive error of affective forecasting. Perhaps societies such as Japan, which are in the process of erasing themselves as they forgo marriage and, even more so, childbearing, might be said to suffer from too much reason.

Yet another error Kahneman brings to our attention is the focusing illusion. Our world is what we attend to, and our feelings towards it have less to do with objective reality than with what we are paying attention to. Something like the focusing illusion accounts for a fact that many of us in good health would find hard to believe; namely, that paraplegics are no more miserable than the rest of us. Kahneman explains it this way:

Most of the time, however, paraplegics work, read, enjoy jokes and friends, and get angry when they read about politics in the newspaper.

Adaptation to a new situation, whether good or bad, consists, in large part, of thinking less and less about it. In that sense, most long-term circumstances, including paraplegia and marriage, are part-time states that one inhabits only when one attends to them. (405)

Erasmus identifies something like the focusing illusion in states like dementia, where the old are no longer capable of reflecting on the breakdown of their bodies and their impending death, but he captured it best, I think, in these lines:

But there is another sort of madness that proceeds from Folly so far from being any way injurious or distasteful that it is thoroughly good and desirable and this happens when by a harmless mistake in the judgment of things the mind is freed from those cares which would otherwise gratingly afflict it and smoothed over with a content and satisfaction it could not under other circumstances so happily enjoy. (78)

We may complain about our hedonic set points, but as the psychologist Dan Gilbert points out, they not only offer us resilience on the downside, since we will adjust to almost anything life throws at us; they should also caution us against believing that the key to happiness lies in any one thing, whether the perfect job or the perfect spouse. But that might leave us with a question: what exactly is this self we are trying to win happiness for?

A good deal of Kahneman’s research deals with the disjunction between two selves in the human person, what he calls the experiencing self and the remembering self. It appears that the remembering self usually gets the last laugh in that it guides our behavior. The best-known example of this is Kahneman’s colonoscopy study, where the pain of the procedure was tracked minute by minute and then compared with patients’ later answers to questions about their future decisions.

The surprising thing was that future decisions were biased not by the frequency or duration of pain over the course of the procedure but by how the procedure ended. The conclusion dictated how much negative emotion was associated with the procedure. That is, the experiencing self seemed to have lost its voice over how the procedure was judged and how it would be approached in the future.

Kahneman may have found an area where the dominance of the remembering self over the experiencing self is irrational, but again, perhaps it is generally good that endings, that closure, are the basis upon which events are judged. It’s not the daily pain and sacrifice that go into Olympic training that count so much as outcomes, and the meaning of some life event really does change based on its ultimate outcome. There is some wisdom in the words of Solon that we should “Count no man happy until the end is known”, which doesn’t mean no one can be happy until they are dead, but that we can’t judge the meaning of a life until its story has concluded.

“Okay then”, you might be thinking, “what is the point of all this, that we should try to be irrational?” Not exactly. My point is that we should perhaps take a slight breather from the critique of human nature from the standpoint of its less than full rationality, and that our flaws, sometimes, and just sometimes, are what make life enjoyable and interesting. Sometimes our individual irrationality may serve larger social goods of which we are foolishly oblivious.

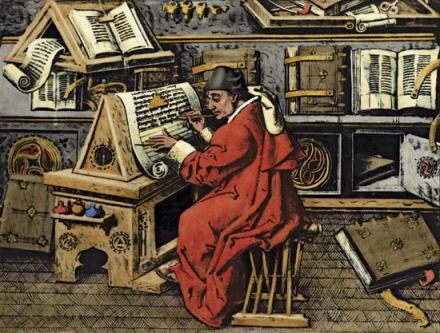

When Erasmus wrote his Praise of Folly he was careful to separate the foolishness of everyday people from the folly of those in power, whether that power be political power or intellectual power such as that of the scholastic theologians. Part of what distinguished the fool on the street from the fool on the throne or in the cloister was the claim of the latter to be following the dictates of reason: the reason of state, or the internal logic of a theology that argued over angels on the head of a pin. The fool on the street knew he was a fool, or at least experienced the world as opaque, whereas the fools in power thought they had all the answers. For Erasmus, the very certainty of those in power, along with the mindlessness of their goals, made them even bigger fools than the rest of us.

One of our main weapons against power has always been to laugh at the foolishness of those who wield it. We could turn even a psychopathically rational monster like Hitler into a buffoon because even he, after all, was one of us. Perhaps if we ever do manage to create machines vastly more intelligent than ourselves we will have lost something by no longer being able to make jokes at the expense of our overlords. Hyper-rational characters like Data from Star Trek or Sheldon from The Big Bang Theory are funny because they are either endearingly trying to enter the mixed-up world of human irrationality, or because their total rationality, in a human context, is itself a form of folly. Super-intelligent machines might not want to become flawed humans, and though they might still be fools just like their creators, they likely wouldn’t get the joke.