Human beings are weird. At least, that is, when we compare ourselves to our animal cousins. We’re weird in terms of our use of language, our creation and use of symbolic art and mathematics, and our extensive use of tools. We’re also weird in terms of our morality, and engage in strange behaviors vis-à-vis one another that are almost impossible to find throughout the rest of the animal world.

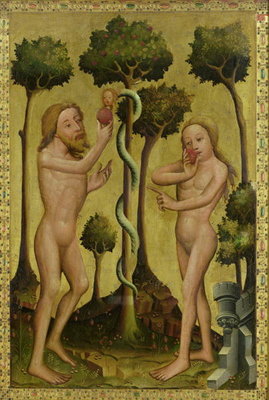

We will help one another in ways that no other animal would think of, sacrificing resources, and sometimes going so far as to surrender the one thing evolution commands us to do, to reproduce, in order to aid total strangers. But don’t get on our bad side. No species has ever murdered or abused fellow members of its own species with such viciousness or cruelty. Perhaps religious traditions have something fundamentally right: that our intelligence, our “knowledge of good and evil”, really has put us on an entirely different moral plane, and opened up a series of possibilities and a consciousness new to the universe, or at least to our limited corner of it.

We’ve been looking for purely naturalistic explanations for our moral strangeness at least since Darwin. Over the past decade or so there has been a growing number of hybrid explorations of this topic that combine, in varying degrees, elements of philosophy, evolutionary theory and cognitive psychology.

Joshua Greene’s recent Moral Tribes: Emotion, Reason, and the Gap Between Us and Them is an excellent example of these. Yet it is also a book that suffers from the flaw of almost all of these reflections on the singularity of human moral distinction. What it gains in explanatory breadth comes at the cost of a diminished understanding of how life on this new moral plane is actually experienced, in the way that knowing the volume of water in an ocean, lake or pool tells you nothing about what it means to swim.

Greene, like any thinker looking for a “genealogy of morals”, peers back into our evolutionary past. Human beings spent the vast majority of our history as members of small tribal groups of perhaps a few hundred members. Wired into our very nature over eons of evolutionary time is an unconscious ability to grapple with conflicts between our interests as individuals and the interests of the tribe to which we belong, what Greene calls the conflicts of Me vs. Us.

When evolution comes up with a solution to a commonly experienced set of problems it tends to hard-wire that solution. We don’t think at all about how to see, ditto how to breathe, and when we need to, it’s a sure sign that something has gone wrong. There is some degree of nurture in this equation, however. Raise a child up to a certain age in total darkness and they will go blind. The development of language skills, especially, shows this nature-nurture codependency. We are primed by evolution for language acquisition at birth and just need an environment in which any language is regularly spoken. Greene thinks our normal everyday “common sense morality” is like this. It comes naturally to us and becomes automatic given the right environment.

Why might evolution have wired human beings morally in this way, especially when it comes to our natural aversion to violence? Greene thinks Hobbes was right when he proposed that human beings possess a natural equality in the ability to kill, a consequence of our evolved ability to plan and use tools. Even a small individual could kill a much larger human being if they planned it well and struck fast enough. Without some inbuilt inhibition against acts of personal aggression, humanity would have quickly killed itself off in a Stone Age version of the Hatfields and the McCoys.

This automatic common sense morality has gotten us pretty far, but Greene thinks he has found a way in which it has also become a bug, distorting our thinking rather than helping us make moral decisions. He thinks he sees this distortion in the wrong decisions we make when imagining how to save people from being run over by runaway trolley cars.

Over the last decade the Trolley Problem has become standard fare in any general work dealing with cognitive psychology. The problem varies, but in its most standard depiction the scenario goes as follows: there is a trolley hurtling down a track that is about to run over and kill five people. The only way to stop this trolley is to push an innocent, very heavy man onto the tracks, a decision guaranteed to kill the man. Do you push him?

The answer people give is almost universally no, and Greene intends to show why most of us answer in this way even though, as a matter of blunt moral reasoning, saving five lives should be worth more than sacrificing one.

The problem, as Greene sees it, is that we are using our automatic moral decision making faculties to make the “don’t push” call. The evidence for this can be found in the fact that those whose ability to make automatic moral decisions is compromised end up making the “right” moral decision to sacrifice one life in order to save five.

People who have compromised automatic decision making processes might be persons with damage to their ventromedial prefrontal cortex, persons such as Phineas Gage, whose case was made famous by the neuroscientist Antonio Damasio in his book Descartes’ Error. Gage was a 19th century railroad worker who, after suffering an accident that damaged part of his brain, became emotionally unstable and unable to make good decisions. Damasio used his case and more modern ones to show just how important emotion is to our ability to reason.

It just so happens that persons suffering from Gage-style injuries also decide the Trolley Problem in favor of saving five persons rather than refusing to push one to his death. Persons with brain damage aren’t the only ones who decide the Trolley Problem in this way; autistic individuals and psychopaths do so as well.

Greene makes some leaps from this difference in how people respond to the Trolley problem, proposing that we have not one but two systems of moral decision making which he compares to the automatic and manual settings on a camera. The automatic mode is visceral, fast and instinctive whereas the manual mode is reasoned, deliberative and slow. Greene thinks that those who are able to decide in the Trolley problem to push the man onto the tracks to save five people are accessing their manual mode because their automatic settings have been shut off.

The leaps continue in that Greene thinks we need manual mode to solve the types of moral problems our evolution did not prepare us for: not Me vs. Us, but Us vs. Them, our society in conflict with other societies. Moral philosophy is manual mode in action, and Greene believes one version of our moral philosophy is head and shoulders above the rest: Utilitarianism, which he would prefer to be called Deep Pragmatism.

Needless to say, there are problems with the Trolley Problem. How, for instance, does one interpret the change in responses to the problem when one substitutes a switch for a push? The number of respondents who choose to send the fat man to his death on the tracks to save five people increases significantly when one exchanges a push for the flip of a switch that opens a trapdoor. Greene thinks it is because giving us bodily distance allows our more reasoned moral thinking to come into play. However, this is not the conclusion one reaches when looking at real instances of personal versus instrumentalized killing, that is, in war.

We’ve known for quite a long time that individual soldiers have incredible difficulty killing even enemy soldiers on the battlefield, a subject brilliantly explored by Dave Grossman in his book On Killing: The Psychological Cost of Learning to Kill in War and Society. The strange thing is that whereas an individual soldier has problems killing even one human being who is shooting at him, and can sustain psychological scars because of such killing, airmen working in bombers who incinerate thousands of human beings, including men, women and children, do not seem to be affected to such a degree by either inhibitions on killing or its mental scars. The US military’s response to this is to try to instrumentalize and automate killing by infantrymen in the same way killing by airmen and sailors is dissociated. Disconnection from their instinctual inhibitions against violence allows human beings to achieve levels of violence impossible at an individual level.

It may be that the persons who choose to save five persons at the cost of one aren’t engaging in a form of moral reasoning at all; they are merely comparing numbers. 5 > 1, so all else being equal, choose the greater number. Indeed, the only way it might be said that higher moral reasoning was being used in choosing 5 over 1 would be if the 1 who needed to be sacrificed to save 5 were the person being asked, the question then being: would you throw yourself on the trolley tracks in order to save 5 other people?

Our automatic, emotional systems are actually very good at leading us to moral decisions, and the reasons we have trouble stretching them to Us vs Them problems might be different than the ones Greene identifies.

A reason the stretching might be difficult can be seen in a famous section of The Brothers Karamazov by the Russian novelist Fyodor Dostoyevsky. “The Grand Inquisitor” is a section where the character Ivan recounts to his brother Alyosha an imagined tale in which Jesus has returned to earth and is immediately arrested by authorities of the Roman church, who claim that they have already solved the problem of man’s nature: the world is stable so long as mankind is given bread and prohibited from exercising his free will. Writing in the late 19th century, Dostoyevsky was an arch-conservative with a deep belief in the Orthodox Church and a prescient anxiety regarding the moral dangers of nihilism and revolutionary socialism in Russia.

In her On Revolution, Hannah Arendt pointed out that Dostoyevsky was conveying with his story of the Grand Inquisitor not only an important theological observation, that only an omniscient God could experience the totality of suffering without losing sight of the suffering individual, but an important moral and political observation as well: the contrast between compassion and pity.

Compassion by its very nature can not be touched off by the sufferings of a whole group of people, or, least of all, mankind as a whole. It cannot reach out further than what is suffered by one person and remain what it is supposed to be, co-suffering. Its strength hinges on the strength of passion itself, which, in contrast to reason, can comprehend only the particular, but has no notion of the general and no capacity for generalization. (75)

In light of Greene’s argument, what this means is that there is a very good reason why our automatic morality has trouble with abstract moral problems: without having sight of an individual to whom moral decisions can be attached, one must engage in a level of abstraction our older moral systems did not evolve to grapple with. As a consequence of our lack of God-like omniscience, moral problems that deal with masses of individuals achieve consistency only by applying some general rule. Moral philosophers, not to mention the rest of us, are in effect imaginary kings issuing laws for the good of their kingdoms. The problem here is not only that the ultimate effect of such abstract decisions is opaque due to our general uncertainty regarding the future, but that there is no clear and absolute way of defining for whose good exactly we are imposing these rules.

Even the Utilitarianism that Greene thinks is our best bet for reaching common agreement regarding such rules has widely different and competing answers to the question “good for whom?” In its camp can be found Abolitionists such as David Pearce, who advocate the re-engineering of all of nature to minimize suffering, and Peter Singer, who establishes a hierarchy of rational beings. For the latter, the more of a rational being you are the more the world should conform to your needs, so much so that Singer thinks infanticide is morally justifiable because newborns do not yet possess the rational goals and ideas regarding the future possessed by older children and adults. Greene, who thinks Utilitarianism could serve as mankind’s common “moral currency”, or language, himself has little to say regarding where his concept of Utilitarianism falls on this spectrum, but we are very far indeed from anything like universal moral agreement on the Utilitarianism of Pearce or Singer.

21st century global society has indeed come to embrace some general norms. Past forms of domination and abuse, most notably slavery, have become incredibly rare relative to other periods of human history. Though the change is far from universal, women are better treated than in ages past. External relations between states have improved as well: the world is far less violent than in any other historical era, and the global instruments of cooperation and, if far too seldom, coordination are more robust.

Many factors might be credited here, but the one I would least credit is any adoption of a universal moral philosophy. Certainly one of the large contributors has been the increased range over which we exercise our automatic systems of morality. Global travel, migration, television, fiction and film all allow us to see members of the “Them” as fragile, suffering, and human, all too human, individuals like “Us”. This may not provide us with a negotiating tool to resolve global disputes over questions like global warming, but it does put us on the path to solving such problems. For a stable global society will only appear when we realize that, in more senses than not, there is no tribe on the other side of us, that there is no “Them”, there is only “Us”.
