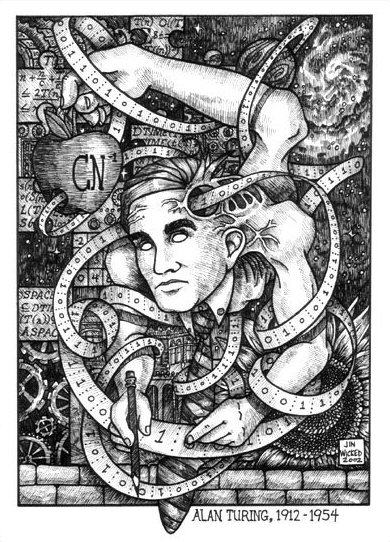

The year 2012 marks the centenary of Alan Turing’s birth. Turing, of course, was a British mathematical genius who was preeminent among the “code-breakers”, those who during the Second World War helped to break the Nazi Enigma codes and were thus instrumental in helping the Allies win the war. Turing was one of the pioneers of modern computers. He was the first to imagine what became known as a Universal Turing Machine (the title of the drawing above): the idea that any computer was in effect equal to any other and could, therefore, given sufficient time, solve any problem whose solution was computable.

In the last years of his life Turing worked on applying mathematical concepts to biology. Presaging the work of Benoît Mandelbrot, he was particularly struck by how the repetition of simple patterns could give rise to complex forms. Turing was in effect using computation as a metaphor to understand nature, something that can be seen today in the work of people such as Stephen Wolfram in his A New Kind of Science, and in fields such as chaos and complexity theory. It also lies at the root of the synthetic biology being pioneered, as we speak, by Craig Venter.

Turing is best remembered, however, for a thought experiment he proposed in a 1950 paper, Computing Machinery and Intelligence, that became known as the “Turing test”, a test that has remained the pole star for the field of artificial intelligence up until our own day, along with the tragic circumstances that surrounded the last years of his life.

In my next post, I will provide a more in-depth explanation of the Turing test. For now, it is sufficient to know that the test proposes to identify whether a computer possesses human-level intelligence by seeing if the computer can fool a person into thinking it is human. If the computer can do so, then it can be said, under Turing’s definition, to possess human-level intelligence.

Turing was a man who was comfortable with his own uniqueness, and that uniqueness included the fact that he was homosexual at a time when homosexuality was considered both a disease and a crime. In 1952, Turing, one of the greatest minds ever produced by Britain, a hero of World War Two, and a member of the Order of the British Empire, was convicted in a much-publicized trial for having engaged in a homosexual relationship. It was the same law under which Oscar Wilde had been prosecuted almost sixty years before.

Throughout his trial, Turing maintained that he had done nothing wrong in having such a relationship, but upon conviction he faced a series of nothing-but-bad options. The least bad of these, he decided, was hormone “therapy” as a means of controlling his “deviant” urges, a therapy he chose to undergo voluntarily given the alternatives, which included imprisonment.

The idea of hormone therapy represented a very functionalist view of the mind. Hormones had been discovered to regulate human sexual behavior, so the idea was that if you could successfully manipulate hormone levels you could produce the desired behavior. Perhaps part of the reason Turing chose this treatment is that it had something of the input-output qualities of the computers that he had made the core of his life’s work. In any case, the therapy ended after a year, the period for which he was sentenced to undergo the treatment. Being shot through with estrogen did not have the effect of mentally and emotionally castrating Turing, but it did have nasty side effects such as making him grow breasts and “feminizing” his behavior.

Turing’s death, all evidence appears to indicate, came at his own hand, ingesting an apple laced with poison in seeming imitation of the movie Snow White. Why did Turing commit suicide, if in fact he did so? It was not, it seems, an effect of his hormone therapy, which had ended quite some time before. He did not appear especially despondent to his family or friends in the period immediately before his death.

One plausible explanation is that, given the rising tensions of the Cold War, and the obvious value Turing had as an espionage target, Turing realized that on account of his very public outing, he had lost his private life. Who could be trusted now except his very oldest and deepest friends? What intimacy could he have when everyone he met might be a Soviet spy meant to seduce what they considered a vulnerable target, or an agent of the British or American states sent there to entrap him? From here on out his private life was likely to be closely scrutinized: by the press, by the academy, by Western and Soviet security agencies. He had to wonder if he would face new trials, new torturous “treatments” to make him “normal”, perhaps even imprisonment.

What a loss of honor for a man who had done so much to help the British win the war. This is the reason, perhaps, that Turing’s death occurred on or very near the ten-year anniversary of the D-Day landing he had helped to make possible. What outlets could there be for a man of his genius when all of the “big science” was taking place under the umbrella of the machinery of war, where so much of science was regarded as state secrets and guarded like locked treasures, and any deviation from the norm was considered a vulnerability that needed to be expunged? Perhaps his brilliant mind had shielded him from the reality of what had happened and his new situation after his trial, but only for so long, and once he realized what his life had become the facts became too much to bear.

We will likely never truly know.

The centenary of Turing’s birth has been rightly celebrated with a bewildering array of tributes and remembrances. Perhaps the most interesting of these tributes, so far, has been the unveiling of a symphony composed entirely by a computer and performed by the London Symphony Orchestra on July 2, 2012. This was a piece (really a series of several pieces) “composed” by a computer algorithm, Iamus, and entitled “Hello World!”

Please take a listen to this piece by clicking on the link below, and share with me your thoughts. Your ideas will help guide my own thoughts on this perplexing event, but my suspicion right now is that “Hello World!” represents something quite different from what either its sympathizers or detractors suggest, and gives us a window into the meaning and significance of the artificial intelligences we are bringing into what was once our world alone.

Good thoughts on Turing. I thought I would share with you a post in Quantum Moxie, a physics blog by my good friend Ian T. Durham. In it he discusses the possibility that Turing’s death was accidental.

Thanks for the great link. I think it’s totally conceivable that Turing’s death was an accident: he showed no signs of depression in the lead up to his death to either his therapist or his family and friends.

For me, the biggest clue in terms of suicide was his supposed fascination with the poison apple scene from Snow White- what a bizarre coincidence!

Turing was also smart enough to leave us with ambiguity. Sadly, I think we’ll never truly know.

Why the ‘Chinese Room’? Is it a reference to John Searle’s Chinese Room? (just curious about the title)

Regarding Turing’s death, I must say I’m getting quite sick of the lack of historical understanding on this matter. Let me briefly explain. A few weeks ago, plastered all over my Facebook feed were posts from ‘friends’ sharing posters (and I use the word ‘poster’ liberally) of the face of Alan Turing, with words in big fonts explaining how his death was the result of the persecution he suffered as a gay man. I’m glad I read your blog, which offered a much more nuanced perspective on this matter.

Not that I don’t believe his death was a result of the (some might argue not so much) persecution he suffered as a gay man, but what bothers me is the loss of historical context, which often gets filtered through our contemporary understanding of the world and, in the process, mythologises or even trivialises his death (i.e. applying our own yardsticks to another era).

In any case, I’m more concerned about what he has written, which, ironically, most of these ‘Facebook posters’ failed to mention. His ideas, such as the Turing Test, remain the holy grail of Artificial Intelligence. But I won’t comment on that since I know you will be blogging about that in your next post. Looking forward to it.

Charles,

Part 2 of this post will indeed discuss John Searle- hence the title. I myself was uncertain as to the relationship between Turing’s persecution as a gay man and his suicide until I did some research into the question.

None of this is to discount the fact that his persecution was real. Or let me put it this way: how would you feel if the choice you faced was between prison or the ingestion of estrogen that made you grow breasts while essentially being shamed into having sexual relations with a sex you weren’t attracted to?

Times have changed, thank God, but the importance of Turing, as you point out, goes well beyond that, and that importance will be the subject of my future post.

I find the music rather unsatisfying. Perhaps we should ask the “composer” about his/her influences and why he/she decided to compose this piece.

But was it really created by a computer? It sounds a little like some of my own primitive efforts with Garage Band. As long as I stick to the same key (or close to it) and throw in the right amount of effects that the app can generate the result will come out sounding vaguely like a composition of some sort (mostly not very good but still sounding vaguely like music even though I know nothing of musical composition). How do we know it wasn’t really composed by human? Did a human pick the instruments, the key, the tempo? Did a human program the computer to pick randomly from a range of selections based on rules and restrictions? Did a human write the program, turn on the computer, and trigger the computer to compose?

All great questions James. I hope to answer most of them on my next post which I hope to have up by the end of the week.

I found the composition unsatisfying as well, but much of that might be the result of its abstract style, a style used by many contemporary composers, and something a lot of people don’t like.

James,

I copied and pasted what you find below from the draft of the post I intend to do this weekend.

I think this addresses your questions and also, I think, expands on the comments offered by Dan Fair.

The composition Hello World! was created using a very particular form of algorithm (a process or set of rules to be followed in calculations or other problem-solving operations) known as a genetic algorithm; in other words, Iamus is a genetic algorithm. In very over-simplified terms, a genetic algorithm works like evolution. (a) There is a “population” of randomly created individuals (in Iamus’ case it would be sounds from a collection of instruments). (b) Those individuals are then selected against an environment for the condition of best fit (I do not know in Iamus’ case if this best fit was the judgement of classically trained humans, some selection of previous human-created compositions, or something else); the individuals that survive (are chosen as best meeting the environment) are then combined to form new individuals (compositions in Iamus’ case). (c) An element of random features is introduced to individuals along the way to see if they help individuals better meet the fit. The survivor that best meets the fit is your end result.
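
To make steps (a) through (c) a little more concrete, here is a minimal sketch in Python. To be clear, this is not how Iamus works; I do not know its internals. Everything here is an assumption made up for illustration: an "individual" is a short melody of eight MIDI pitch numbers, and the "environment" is a toy scoring rule that rewards notes in the C major scale and small steps between neighboring notes. The function names (`random_melody`, `fitness`, `crossover`, `mutate`, `evolve`) are hypothetical as well.

```python
import random

# Hypothetical toy setup, not Iamus: a melody is a list of MIDI pitch numbers.
C_MAJOR = {0, 2, 4, 5, 7, 9, 11}  # pitch classes of the C major scale
MELODY_LEN = 8
LOW, HIGH = 48, 72                # allowed pitch range (two octaves)


def random_melody(rng):
    """(a) Create one random individual."""
    return [rng.randint(LOW, HIGH) for _ in range(MELODY_LEN)]


def fitness(melody):
    """(b) Toy 'environment': in-scale notes plus small melodic steps."""
    in_scale = sum(1 for p in melody if p % 12 in C_MAJOR)
    smooth = sum(1 for a, b in zip(melody, melody[1:]) if abs(a - b) <= 4)
    return in_scale + smooth


def crossover(a, b, rng):
    """Combine two survivors by splicing them at a random cut point."""
    cut = rng.randint(1, MELODY_LEN - 1)
    return a[:cut] + b[cut:]


def mutate(melody, rng, rate=0.1):
    """(c) Randomly replace some notes to introduce new features."""
    return [rng.randint(LOW, HIGH) if rng.random() < rate else p for p in melody]


def evolve(generations=50, pop_size=30, seed=0):
    rng = random.Random(seed)
    population = [random_melody(rng) for _ in range(pop_size)]
    for _ in range(generations):
        # Keep the better-scoring half, then refill with mutated offspring.
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]
        children = [
            mutate(crossover(rng.choice(survivors), rng.choice(survivors), rng), rng)
            for _ in range(pop_size - len(survivors))
        ]
        population = survivors + children
    return max(population, key=fitness)


if __name__ == "__main__":
    best = evolve()
    print(best, fitness(best))
```

Notice that a human wrote `fitness`, chose the pitch range, and picked the mutation rate. In this sketch at least, the "taste" lives entirely in those choices, which is exactly the kind of thing James was asking about.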

Dan, I did not say “living forms”, I said “Forms of Life”. There is a very strong difference between these notions. Secondly, albeit I superficially agree that “intelligence” is not an attribute that could be considered exclusively human, the concept of “intelligent” is completely unsuitable for speaking about the kind of issues that are showing up here.

Stanislaw Lem once (deep in the 1950s I guess) wrote a very nice piece titled “Personetics”. It is about the “simulation” of the evolution of a population of entities that develop their own language. At some point, these entities started to think about “god”, and the issue of the transcendental “creator”. Lem’s story is about a guy reviewing a book in which the inventor describes how difficult he finds it to switch off the machine. Despite some fundamental impossibilities, Lem got one point right: language and the ability for autonomous thought need a social embedding. ONE super-hyper-mega computer alone will NEVER develop any kind of autonomy beyond slime moulds.

(I think more is to come about that)

The whole issue is very tricky and difficult, though. Too difficult for short remarks like this one. Thus I started once to collect more or less exact arguments about that stuff on my blog (“The Putnam Program”). Of course, it does not cover all aspects, but I think quite some of them.

(the comment to Dan should have been placed below…)

Rick

genetic algorithms pretend something that they can’t achieve.

The label is almost pure propaganda. It has absolutely nothing to do with the genetics of living organisms. It does definitely NOT work like evolution. Evolution does not optimize. There is no “result” in an evolution. To think so is naive, or some kind of applied reductionism. So-called G.A. do nothing but recombinant optimization in an a priori defined problem space. There is no cell together with its recoding, its emergent properties, its post-translational processes, its meta-stability due to the immaterial “self-organized” networks, even the cell’s ability to learn how to transcribe….

Actually, in organisms we can’t even find something like a “gene”. As Evelyn Fox Keller lucidly described, the gene is more ideology than scientific concept. In the beginning, from the 1940s (Avery) to the early 1960s (Monod, Jacob), it was helpful, but even in those times it was ideologically distorted (cybernetism).

Holland (the “inventor” of g.a.) implemented the myth of those days, the one-gene-one-function dogma, which meanwhile has been dropped completely (fortunately so). It is as you said: it is an algorithm, which has been defined as a rule that produces a stable result in finite time in a finite machine. Then it stops. Is that evolution? Or “like” evolution? I guess it is not.

Once we see that it is an algorithm for optimization we should ask: Which concept has been optimized by it? Who set the goal? The criteria? The fitness dynamics?

James, I fully agree. Also, all your questions point to the right issue: the Lebenswelt (as coined by Wittgenstein), or background (as coined by Searle). In order to “compose”, the software first needs to understand. I definitely would reject the claim that this is not possible, though. Yet, the current mainstream and side streams of “artificial intelligence” (a nonsense term in itself) do not allow for anything like writing, or composing.

Composing, as writing, are forms of using a language. Indeed, it is a pandemic disease (drawing on Wittgenstein again) to forget about language, to believe in private language (as the programmer of the software which arranged the tones obviously did), or to think that meaning can be created. As long as this pandemia prevails, we will not see anything “interesting” with respect to computers.

cheers

I have some questions that you might be able to clarify for me, Monoo. 1) I am only superficially familiar with Wittgenstein’s concept of the Lifeworld. What I am curious about is this: In what sense would machines not be said to participate in our Lifeworld? They, after all, share in our history, part of our language, mathematics, etc. Do animals possess a Lifeworld, or is this limited to humans? If animals do possess some version of a Lifeworld, why wouldn’t machines that share animal-like capacities have their own as well?

You say that AI does not allow for anything like writing. 2) What are your thoughts on something like this:

http://bigthink.com/ideafeed/two-years-til-algorithms-write-news-articles-say-software-developers

There is probably one key passage in the Philosophical Investigations (buy it, and read it for the rest of your life :), §201. It is about rule-following. Obviously, in speaking (that is, using language) we follow rules, and also in organizing our social life. Yet, what are rules? Well, pretty obviously, we have some symbols and some operators. They have to be set up by someone, or as a matter of fact, by a community.

The question is: how do we come to follow the rule *correctly*? Well, we don’t always, that’s part of the “rule”: it is not a code. There are exceptions. Anyway, it seems that most of the time we know how to follow a rule. And here there is a “paradox”: We would need further rules, ad infinitum. Thus Wittgenstein says, analysis and explaining must have an end. Yet, we DO follow rules. Hence, he says, we can’t pretend to follow a rule. There is no explicable justification to follow a rule, or how to follow a rule. We just do. The “reason” that we can do is in the Form of Life. Everything belongs to it: all concepts, the way of living, the perception we can have (they are dependent on concepts and theories), how we “negotiate” social interactions, ie how we ascribe and assign roles to each other, how we are going to interpret concepts, how we deal with pets, and so on. The whole mesh, the whole “mess”, as scientists all too quickly think.

Machines: as long as they are deterministic machines, they could be conceived as part of our Form of Life. Yet, even a hammer is not passive. Any tool has some agency, even more so if the tool invades the “space of information”. Yet, in order to develop their own Form of Life, they need full autonomy. Well, that’s not so difficult to achieve, but it needs a paradigm that does NOT follow that of the Turing machine. Again Wittgenstein: There is no such thing as a private language. There is no analytic description of language, in principle! And: meaning is neither in the head, nor is it a mental entity at all!!! We do not have words and language in the head and attach meaning to it, Wittgenstein says. To think otherwise, he calls a disease.

So, to be able to write, the machine should be able to take part in our social life autonomously. Not as a tool, of course. To paraphrase it: machines will be able to write something if they know whether an act is morally allowed. Yet, is it still a “machine” in this case? We will find some matter (of course), we can dissect it, describe its elements, etc. But what do we describe by doing that?

Most animals, except grey parrots, dolphins, orcas, chimpanzees and the like, do not develop culture. Yet, even my cat knows that she is not allowed to perform some acts (stealing the meat if I am not looking at her). But she is not able to sustain that, hence she can’t symbolize it, hence she can’t tell it to another cat. Cats can’t write. Nevertheless, in some limited manner, cats own their Forms of Life. We can’t know how it is to feel like a cat, to perceive like it, etc. We can’t even know this about each other as humans. That’s the reason why culture requires the externalization of symbols, if not language.

Developing/evolving the possibility for Forms of Life needs externalized symbols. But we certainly can’t conclude (as you seem to do) from animal-likeness to the possibility of sharing knowledge about mental content. Such sharing is not possible, even for us, as we are parts and contributors of our Form of Life.

So I stop now, immediately, before writing another 500 pages :))

Hope this clarified the issue at least a bit!

cheers

Great points, Monoo. I hope I can fully address them in my next post- should be up before this Sunday 7/15.

Question: are living forms a product of human intelligence? Not really. And the creator (if any) of such complex structures could indeed be called intelligent (at least the Intelligent Design doctrine, and its hordes of followers, thinks that way). I don’t believe that intelligence is a human attribute and, consequently, it CAN expand the human limits. Life on earth is but one example. The creators of Iamus claim that they model biological evolution and genetics. Is a supercomputer room enough to develop intelligently created forms, to generate a complexity that goes beyond the programmers’ expectations? Well, maybe.

Excellent points, Dan. I tried to explain how the programmers used evolutionary techniques to create Iamus in my reply to James Cross above.

Agreed, Rick. Complexity can definitely evolve from evo-devo approaches, especially if you consider that the complexity of a musical piece is at no point comparable with that of a living being, not even with unicellular forms.

Monoo and Dan,

Thank you for your extensive comments, Monoo, but respectfully, I’ll side with Dan on this one.

Monoo, in some ways I think you’re right that genetic algorithms are a misnomer, but if they aren’t a pale reflection of Natural Selection, they certainly resemble ARTIFICIAL SELECTION in which human beings select for certain traits.

To say that Genetic Algorithms are based on our understanding of evolution is not the same thing as saying they match the complexity found in actual evolution. And the idea of equating the concept of genes with an ideology seems a little dangerous to me in an intellectual sense. Everything in science is a convenient fiction and an artificial border. The question is, is this map, this way of looking at the world, helpful? The great thing about science is that sooner or later it explodes our oversimplifications, and I agree with you that the fading away of the reductionist one-gene-one-behavior view was fortunate, and this not just from a scientific viewpoint, but from a moral one in that it would have likely encouraged all sorts of dangerous tinkering with human biology.

Lastly, because of the expanding role of robots in warfare today there are growing calls for them to be programmed with a set of ethical rules e.g. avoiding harm to civilians etc. I wonder how you understand this in light of your understanding of the importance of “rules”?

Rick

sorry for the impression that I have attacked science… there is only a quite narrow zone between collective obsession and ideology. Regarding the GA it is somewhat strange. Holland certainly just applied a simplification, using “nature” as a kind of template. No problem. The problem with the marketing aspect is that today many people from computer science indeed think that GA are “similar” to natural processes in the cell. Thereby they don’t even see the opportunities and the risks of their approach. The history of the concept of the gene shows that in the beginning it also certainly was not born of ideology. Later, however, in the 1970s and especially the 1980s, the defense of that idea acquired an ideological flavor. Today, geneticists themselves call the idea of the gene as it was widespread at that time a “dogma”.

The point with rules and warfare robots is indeed interesting, and surely not trivial. You certainly know Asimov’s 3 golden rules of robotics. Do you know the film “I, Robot” as well? There the 3 golden rules lead to a revolution of robots against people in the name of security. The DVD has nice bonus material about that… IOW, the rules fail completely while the robots still obey them perfectly. The (abstract) reason is pretty clear: Logic is never exact as soon as we apply it (another result of Wittgenstein). As soon as we apply it we import some semantics into it. Because people think it is exact, that semantics is not declared or made explicit. The logical consequence is the failure of “logic”, or more precisely, the disappointment of an expectation mistakenly addressed to logic.

Back to your warfare bots. If a robot should understand language, it should know about morals, I said. Only then could it be able to understand and to produce language. From here only one conclusion is possible about the warfare bots (as far as they indeed work): military contexts neither resemble normal life nor need a language. This, of course, is quite plausible. We have a strict hierarchy, where any officer is allowed to talk only to the next lower level. This excludes social complexity. Once complexity is banned, language is not needed any more. Everything is an instruction, a command. Since the social environment is highly determined, “everything” can be pre-programmed. This, however, is possible only for “clear” and unambiguous situations.

Such a warfare robot is little more than an ant (mentally, I mean), carrying weapons, stuffed with some sensors, listening to “keywords” (they are not exactly words, they are again a precise code). Walking around, identifying soldiers of the enemy (by means of infrared), and shooting them are all physical exercises. Thus a warfare robot could be much more primitive than a robot that needs to provide free-ranging service on a language basis in a city. As far as I know that’s precisely the strategy of the military doing research about it.

Understanding to not harm civilians again requires background knowledge, or to listen unconditionally to the sergeant. Again, that’s not too unfamiliar for a low rank soldier. If the bot should decide autonomously, he could not, for sure. But I doubt that a robot could learn that. Even humans have certain inabilities concerning that.

Understanding language can be learnt only through education, whether we educate the famous grey parrot “Alex”, a dog, a chimpanzee, a child, or a “machine”. Btw, precisely this (“education”) is the research strategy of the robotics group in Hertfordshire. Saying this, and recalling that the military is perverted or responding to perverted situations, then of course one can think that a bot is educated strictly within military “norms”, i.e. to a perverted entity. But the problem demonstrated by “I, Robot” remains. The need for education implies autonomy; as soon as the bots are autonomous, not even the bot itself can “explain” its actions in a complete manner any more. It has to share the values of its social environment (orthoregulation), which can’t be preprogrammed etc. etc.

Somehow this makes me optimistic that warfare robots are either not possible, or that they remain on the ant level (which indeed needs a lot of optimism, in both cases), or that they will deny military service instead of being trained … which leads to the possibility of robots gone mad due to war…

cheers

Monoo,

Thank you again for your extensive response. I think the crux of our differences is the consequence of our different understanding of complexity. Two examples you used can help me to demonstrate this. You use an ant as an example of simplicity. Yes, an ant is a simple creature, but an ant colony is actually incredibly complex. You also use an army as an example of simplicity, and yes, this is true at the level of the individual, but is an army, or an actual war, really simple? I do not think so. In my understanding very complicated things indeed can emerge from a much simpler layer underneath. Benoît Mandelbrot showed us how some of the most complex patterns found in nature can be created through the application of a very simple rule, which is something very similar to an algorithm.
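
For anyone curious what such a "very simple rule" looks like in practice, here is a small sketch in Python of the rule behind the Mandelbrot set: start with z = 0 and keep replacing z with z² + c, then ask whether z stays bounded. The function names, the iteration limit of 50, and the text-only rendering are my own illustrative choices, not anything taken from Mandelbrot's work.

```python
MAX_ITER = 50  # illustrative cutoff; higher values give a sharper boundary


def escape_time(c, max_iter=MAX_ITER):
    """Apply z -> z*z + c repeatedly; return how many steps until |z| > 2.

    Returns max_iter if z never escapes within the limit, which we treat
    as "c is (probably) in the Mandelbrot set".
    """
    z = 0j
    for n in range(max_iter):
        if abs(z) > 2:
            return n
        z = z * z + c
    return max_iter


def render(width=60, height=20):
    """Draw a coarse text picture: '#' for points that never escaped."""
    rows = []
    for j in range(height):
        y = 1.2 - 2.4 * j / (height - 1)
        row = ""
        for i in range(width):
            x = -2.1 + 3.0 * i / (width - 1)
            row += "#" if escape_time(complex(x, y)) == MAX_ITER else "."
        rows.append(row)
    return "\n".join(rows)


if __name__ == "__main__":
    print(render())
```

The whole rule is the single line `z = z * z + c`; all the intricate structure of the picture comes from repeating it.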

As an aside: you might enjoy or have something interesting to add on the robot/warfare question which I tried to explore in this prior post:

All that said, I think the both of us are in broad agreement that there is something very special in our human ability to have discussions such as this and to take responsibility for the world we live in with our choices. We are also, I am sure, in total agreement that we need to philosophically engage with these questions.

So let’s keep talking and engaging within the contexts of our very different blogs and from our unique perspectives.

Hi Rick,

I agree completely with what you say about colonies of ants and “war”. Yes, these entities and processes are complex. I have described my understanding of complexity in detail in published papers and on the blog, and I think it goes beyond the common usage.

Yet, we talked about the warfare robot. That’s an individual. If we take an individual ant, it is in some way “simple”, comparatively. Well, I know definitely (I am a biologist by education) that even a single ant is “complex”, but its behavior appears rather limited. Again, they do have memory, associative capabilities, etc. At least, there is no language, just contextual reactions. The influences from the outside, communal commands if you like, are very strong through pheromones and the like.

Only in this respect did I call them “simple”. Precisely this aspect I argued to be present in warfare bots too. I would even say that all that robotics is today is just on the level of even simple ants. I doubt that this direction leads to anything more interesting. After 220 million years of natural history they did not start talking.

Machines can simulate intelligence because they are extensions of humans but that doesn’t mean they are intelligent. Computers are tools differing from other tools like shovels, picks, and rakes only in their degree of sophistication and complexity.

Iamus was programmed by a human who first made the decision to create a composing computer then established the rules by which the program would operate to create its inventory of sounds. Presumably a human also initiated the program that caused Iamus to create a composition in honor of Turing. At what point in this process did the computer compose on its own rather than merely obey rules established by a human? I would say it never did.

Ultimately the question becomes whether intelligence is purely binary? Can intelligence be represented completely by algorithm?

My hunch is no but I am not 100% certain of it. I do feel that if it is possible we are still quite a ways from achieving it.

If I could write a truly intelligent program, then my program itself should be able to create another intelligent program. Would the program created by my program be the same or different from the one I created? Could it be more intelligent than the one I created? Or is intelligence one single program?

James,

your suggestions and attitudes perfectly demonstrate why “intelligence” is a concept that is not fruitful in any regard. This is a direct consequence of its history. Intelligence is a buzzword for the operationalization of instrumental behavior. It is more than clear that this creates pseudo-problems if we apply it to the richness of mental life, whether of humans or machines. As a theoretical term it is simply unusable, even counterproductive.

These capabilities needed for a mental life can not be reduced to “problem solving”. Certainly not, of course. (which is/was one of the misconceptions in traditional AI). The mental and meaning can’t be located in the brain! Here I would like to recommend the superb book by David Blair “Wittgenstein, Information: back to the rough ground”.

It is impossible to pretend to follow a rule (Wittgenstein). Thus it is impossible to simulate “mental capacities”, let alone “intelligence”.

Next, in its general common-sense meaning, “intelligence” means the potential for adaptivity in situations never met before. So, how would you program that? An algorithm is closed a priori and by definition. You can’t program what you don’t know. Simply that. The only thing you can do is to provide the potential to develop the capacity for learning. And this can’t be achieved by algorithms either.

Hi James,

Again, all great questions. I think not so much the claims as the unspoken assumptions behind the claims of the creators of Iamus were pure showmanship.

Nevertheless, things are moving incredibly fast and it seems we already have software that creates other software:

http://money.cnn.com/2007/08/27/technology/intentional_software.biz2/index.htm

I do not think we are necessarily headed towards a world where the intelligence of digital computers, if we should call it that, is wholly distinct from human intelligence. Your earlier “garage band” example and my own experience using consumer algorithms such as Pandora put me in mind of a scenario like the one below, one that, I think, if we don’t see it, then our children are very likely to.

Imagine a rock concert where the concert goers place on themselves a kind of device that measures their physiological response to music. They would in fact be connected to a very advanced, by our standards, AI that would be able to produce a variety of simulated instruments.

The AI would compose/perform music on the fly based upon how the crowd reacts. The music would start out quite chaotic but based on the physiological feedback of the concert goers would become more and more ordered and beautiful.

This is merely a science-fiction scenario and perhaps impossible for a crowd of concert goers- there is just too much diversity in reactions. It becomes much less inconceivable, I think, if we imagine the same scenario with only one individual connected to such an AI.

For better or worse, that to me seems to be the direction we are currently headed.
